We therefore need a method or package that can extract only the relevant information from a dataset. In simple terms, we need a filter that narrows the dataset down to what we require.

Hugging Face provides several options for filtering datasets. They help users create customized datasets that contain only the examples that meet specific conditions.

Select() Method

This method works on a list of indices: we define a list that contains the index of every row that we want to extract. It suits small datasets where the row positions are already known. For huge datasets, measured in gigabytes (GB) or terabytes (TB), it is impractical because we cannot inspect the whole dataset to decide which indices to list.

Example:

print(len(new_dataset))

In this example, we used the “select” method to filter the required information from the dataset.

Filter() Method

The filter() method overcomes this limitation of select() because it does not need a list of indices. Instead, it takes a function that tests each row and returns all the rows for which that function evaluates to true.

Example: We save this Python program as “test.py”.

    from datasets import load_dataset

    # Step 1: Load the dataset
    dataset = load_dataset("imdb")

    # Step 2: Define the filtering function
    def custom_filter(example):
        """
        A custom filtering function to retain examples with positive
        sentiment (label == 1).
        """
        return example["label"] == 1

    # Step 3: Apply the filter to create a new filtered dataset
    filtered_dataset = dataset.filter(custom_filter)

    # Step 4: Check the available column names in the filtered dataset
    print("Available columns in the filtered dataset:",
          filtered_dataset.column_names)

    # Step 5: Access information from the filtered dataset
    filtered_examples = filtered_dataset["train"]
    num_filtered_examples = len(filtered_examples)

    # Step 6: Print the total number of filtered examples
    print("Total filtered examples:", num_filtered_examples)

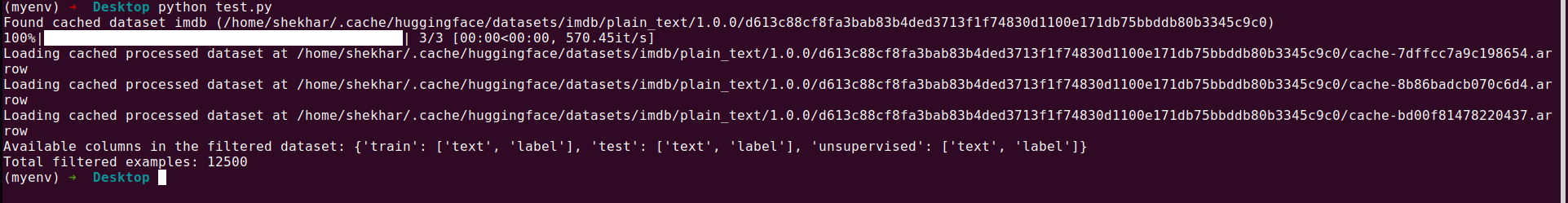

Output:

Explanation:

Line 1: We import the load_dataset function from the datasets library.

Line 4: We load the “imdb” dataset using load_dataset().

Lines 7 to 12: We define the custom filtering function “custom_filter” to keep the examples with positive sentiment (label == 1). This function returns true only for the rows whose label value is 1.

Line 15: At this point, the dataset holds the “imdb” movie review data. We apply the filter function to it to separate the positive reviews, and the result is stored in “filtered_dataset”.

Lines 18 and 19: We check which column names are available in filtered_dataset. Because the loaded dataset contains several splits, “filtered_dataset.column_names” reports the column names for each split.

Lines 22 and 23: In these lines, we access the “train” split of filtered_dataset and store its total number of rows (length).

Line 26: In this last line, we print the count that was computed on line 23.

Filter() with Indices

The filter() method can also work with row indices, as seen with the select() method. To do so, we pass the “with_indices=True” keyword argument to filter(). The filtering function then receives the row index as its second argument, as shown in the following example:

print(len(odd_dataset))

In this example, we used the filter() method to extract the required information from the dataset, keeping only the rows whose index is odd.

The complete details of each parameter of the filter() method can be found in the Hugging Face Datasets documentation.

Conclusion

The Hugging Face Datasets library provides a powerful and user-friendly toolset for working efficiently with various datasets, especially for Natural Language Processing (NLP) and machine learning tasks. The filter() function presented in the program allows researchers and practitioners to extract relevant subsets of data by defining their own filtering criteria. Using this functionality, users can create new datasets that meet specific conditions, such as keeping only the movie reviews with positive sentiment or extracting specific text data.

This step-by-step demonstration illustrates how easy it is to load a dataset, apply custom filter functions, and access the filtered data. In addition, the flexibility of the function parameters allows for custom filtering operations, including multiprocessing support for large datasets. With the Hugging Face Datasets library, users can streamline their data preparation.