If you have an NVIDIA GPU installed on your Proxmox VE server, you can pass it through to a Proxmox VE LXC container and use it there for CUDA/AI acceleration (e.g., TensorFlow, PyTorch). You can also use the NVIDIA GPU for media transcoding, video streaming, and similar tasks in an LXC container that runs, for example, Plex Media Server or NextCloud.

In this article, we will show you how to pass through an NVIDIA GPU to a Proxmox VE 8 LXC container so that you can use it for CUDA/AI acceleration, media transcoding, or other tasks that require an NVIDIA GPU.

Table of Contents:

- Installing the NVIDIA GPU Drivers on Proxmox VE 8

- Making Sure the NVIDIA GPU Kernel Modules Are Loaded in Proxmox VE 8 Automatically

- Creating a Proxmox VE 8 LXC Container for NVIDIA GPU Passthrough

- Configuring an LXC Container for NVIDIA GPU Passthrough on Proxmox VE 8

- Installing the NVIDIA GPU Drivers on the Proxmox VE 8 LXC Container

- Installing NVIDIA CUDA and cuDNN on the Proxmox VE 8 LXC Container

- Checking If the NVIDIA CUDA Acceleration Is Working on the Proxmox VE 8 LXC Container

- Conclusion

- References

Installing the NVIDIA GPU Drivers on Proxmox VE 8

To passthrough an NVIDIA GPU to a Proxmox VE LXC container, you must have the NVIDIA GPU drivers installed on your Proxmox VE 8 server. If you need any assistance in installing the latest version of the official NVIDIA GPU drivers on your Proxmox VE 8 server, read this article.

Making Sure the NVIDIA GPU Kernel Modules Are Loaded in Proxmox VE 8 Automatically

Once you have the NVIDIA GPU drivers installed on your Proxmox VE 8 server, you must make sure that the NVIDIA GPU kernel modules are loaded automatically at boot time.

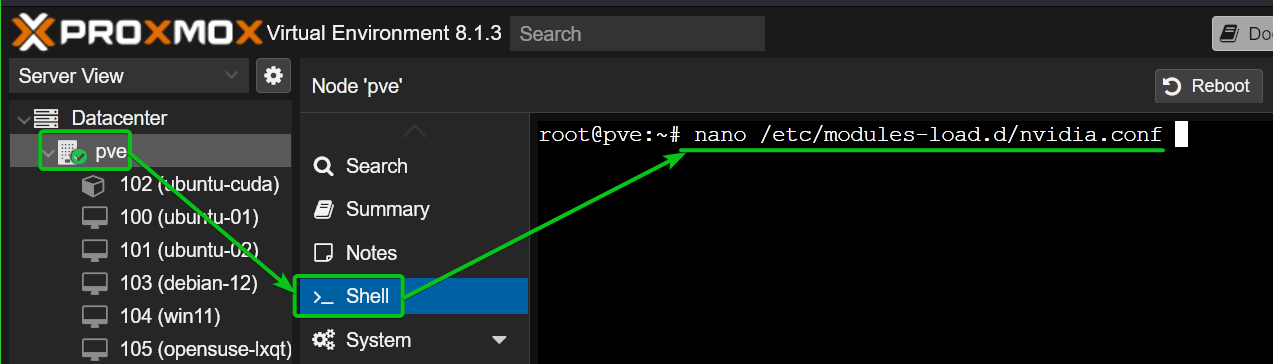

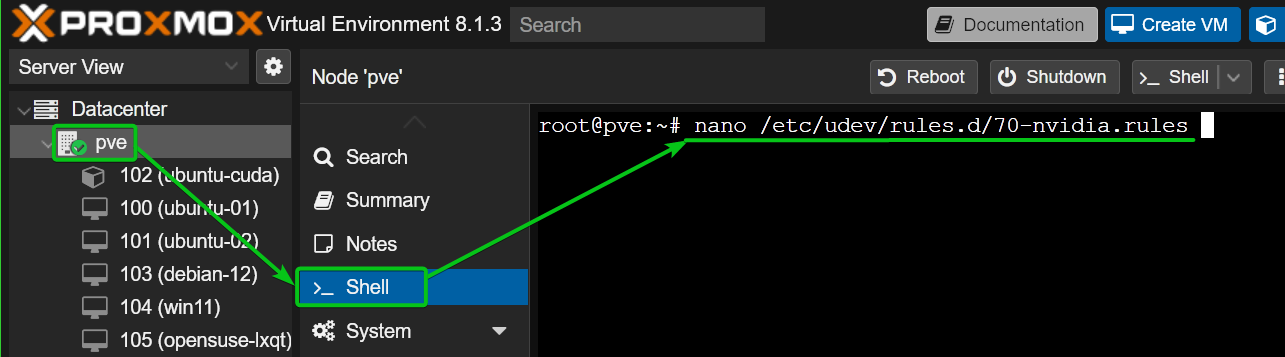

First, create a new file named “nvidia.conf” in the “/etc/modules-load.d/” directory and open it with the nano text editor:

$ nano /etc/modules-load.d/nvidia.conf

Add the following lines and press <Ctrl> + X followed by “Y” and <Enter> to save the “nvidia.conf” file:

nvidia

nvidia_uvm

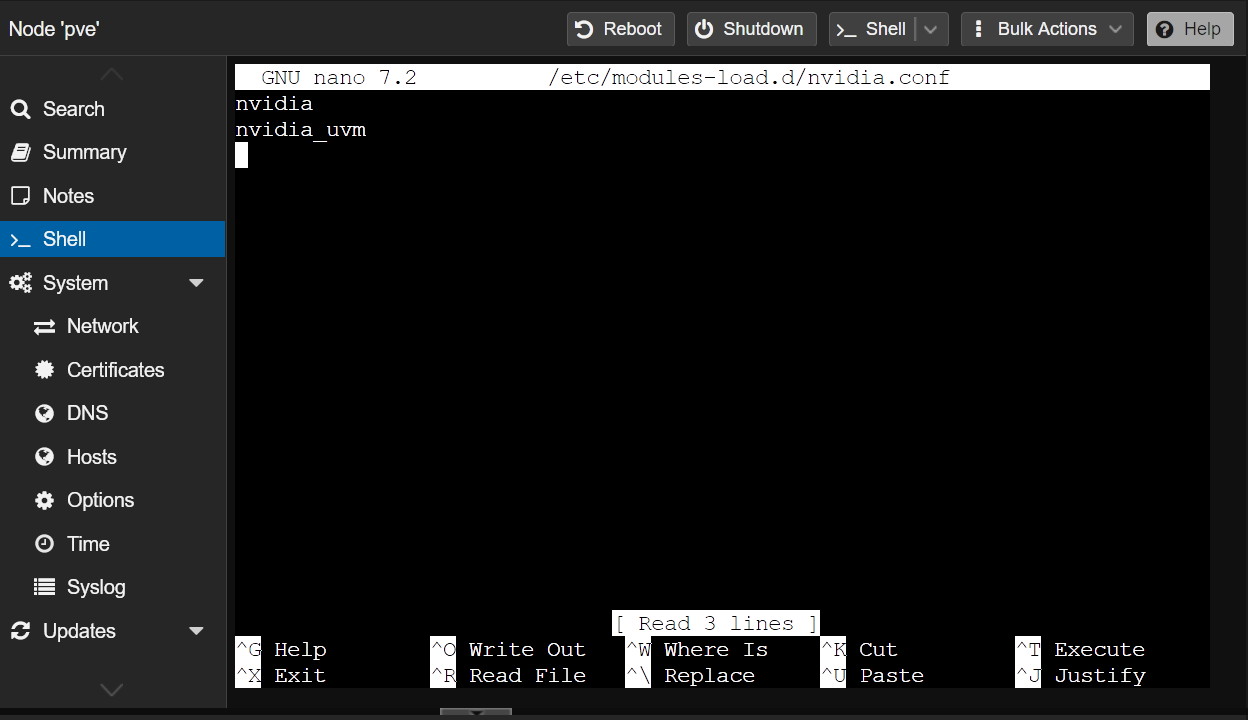

For the changes to take effect, update the “initramfs” file with the following command:

$ update-initramfs -u

For some reason, Proxmox VE 8 does not create the required NVIDIA GPU device files in the “/dev/” directory. Without those device files, the Proxmox VE 8 LXC containers won’t be able to use the NVIDIA GPU.

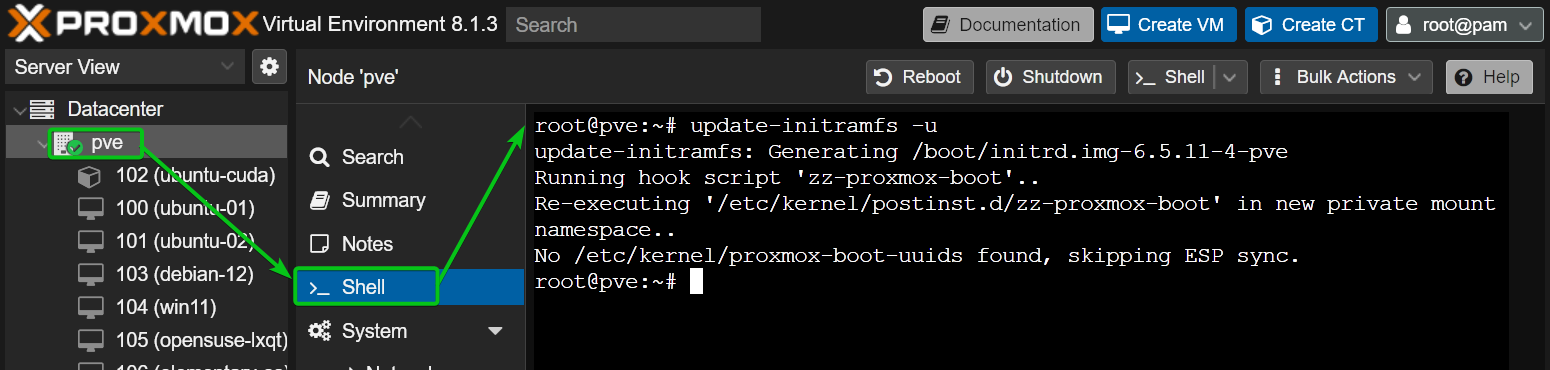

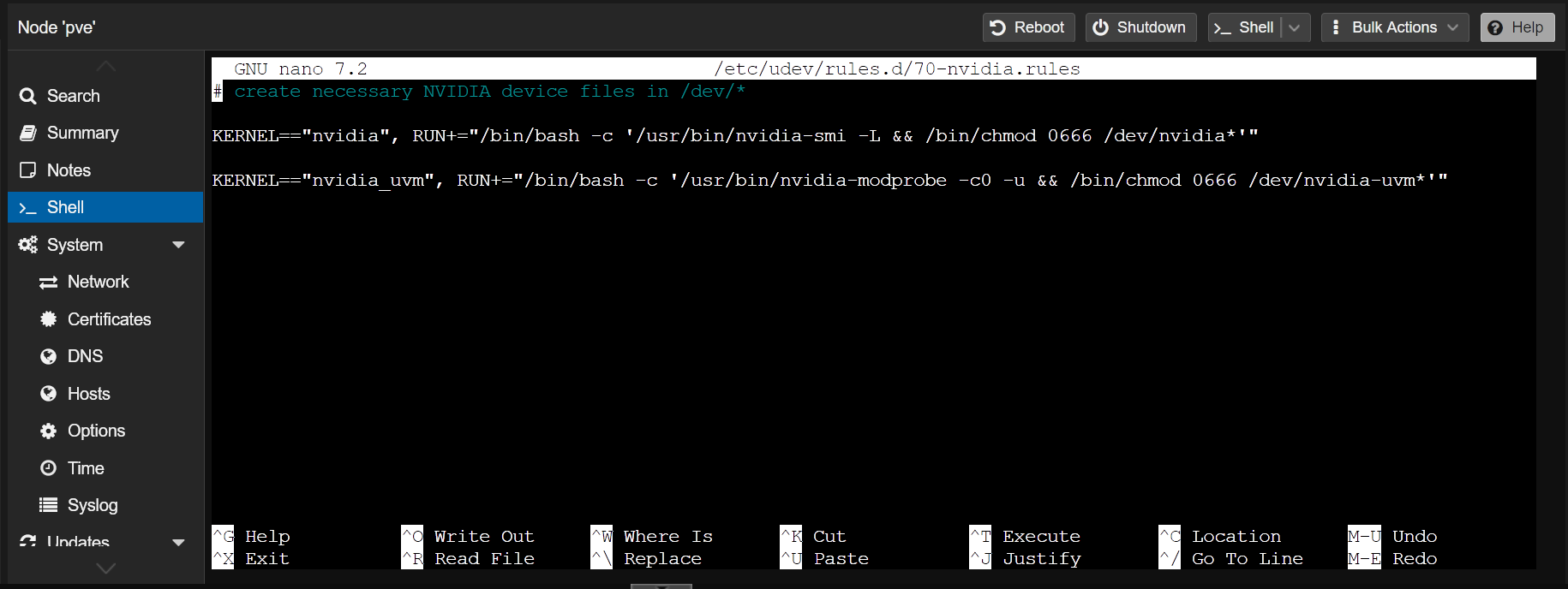

To make sure that Proxmox VE 8 creates the NVIDIA GPU device files in the “/dev/” directory at boot time, create a udev rules file “70-nvidia.rules” in the “/etc/udev/rules.d/” directory and open it with the nano text editor as follows:

$ nano /etc/udev/rules.d/70-nvidia.rules

Type in the following lines in the “70-nvidia.rules” file and press <Ctrl> + X followed by “Y” and <Enter> to save the file:

KERNEL=="nvidia", RUN+="/bin/bash -c '/usr/bin/nvidia-smi -L && /bin/chmod 0666 /dev/nvidia*'"

KERNEL=="nvidia_uvm", RUN+="/bin/bash -c '/usr/bin/nvidia-modprobe -c0 -u && /bin/chmod 0666 /dev/nvidia-uvm*'"

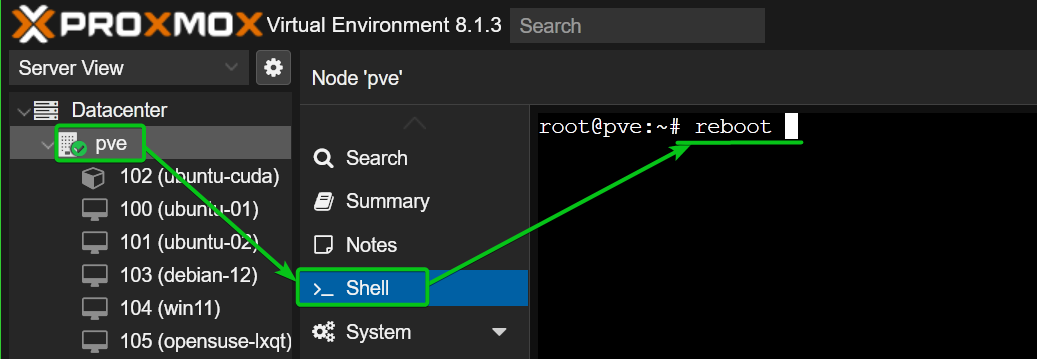

For the changes to take effect, reboot your Proxmox VE 8 server as follows:

$ reboot

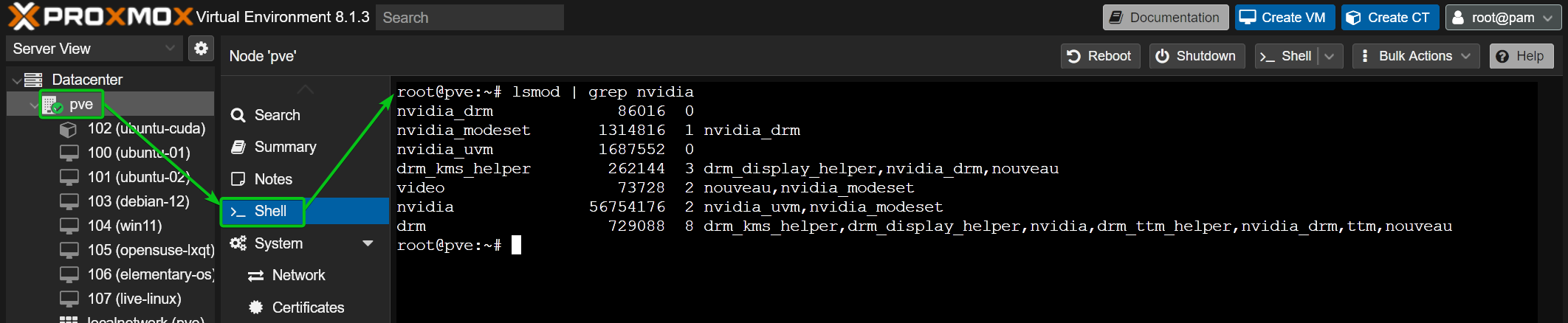

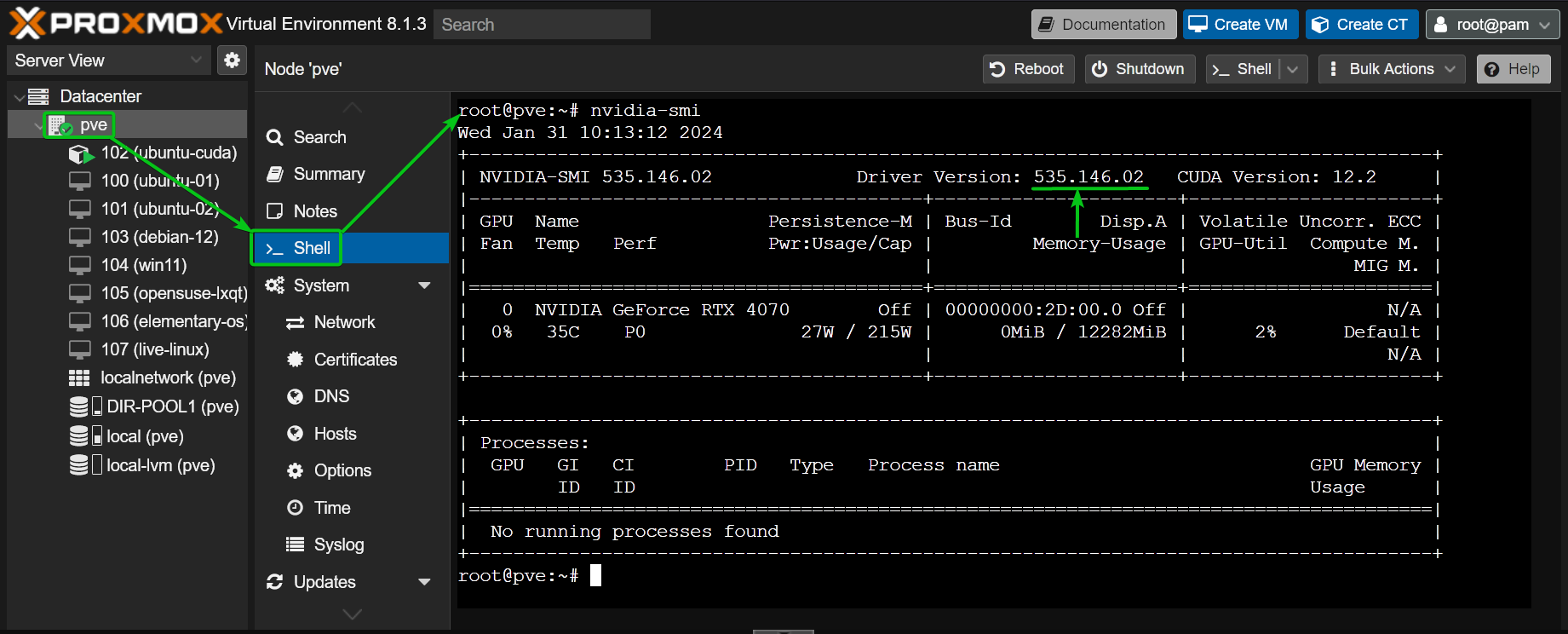

Once your Proxmox VE 8 server boots, the NVIDIA kernel modules should be loaded automatically. You can list them with the following command; the output should look like the following screenshot:

$ lsmod | grep nvidia

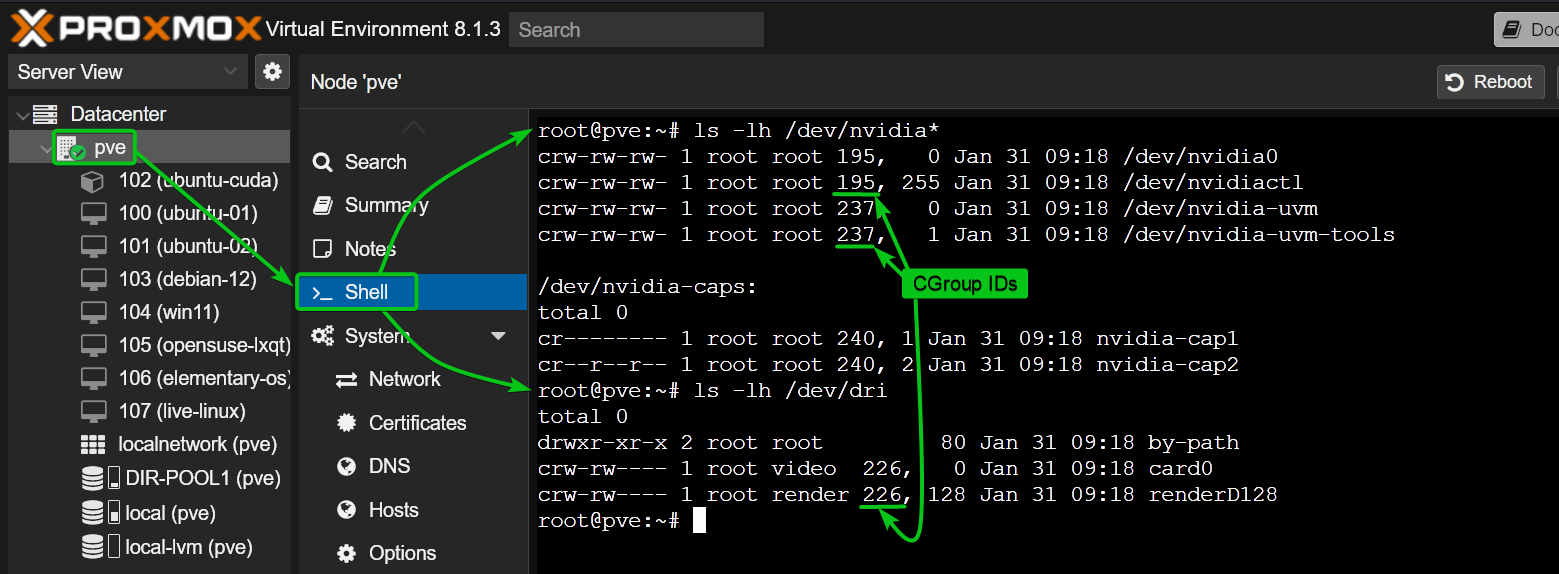
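If you prefer a scripted check over reading the “lsmod” output by eye, the following is a minimal sketch. It assumes that the two modules this setup relies on are named “nvidia” and “nvidia_uvm”; the helper name “missing_modules” is our own and is not part of any NVIDIA or Proxmox VE tool.

```shell
# Hypothetical helper: print each named module that is absent from a
# /proc/modules-style file (one module per line, name in the first column).
missing_modules() {
  modfile="$1"
  shift
  for mod in "$@"; do
    grep -q "^${mod} " "$modfile" || echo "$mod"
  done
}

# No output means that both modules are loaded.
if [ -r /proc/modules ]; then
  missing_modules /proc/modules nvidia nvidia_uvm
fi
```

Any module name that is printed is not loaded yet, which usually points to a problem in the “/etc/modules-load.d/” configuration.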

The required NVIDIA device files should also be populated in the “/dev” directory of your Proxmox VE 8 server. Note the device major numbers of the NVIDIA device files (the number before the comma in the “ls” output), which this article refers to as CGroup IDs. You must allow those CGroup IDs on the LXC container to which you want to pass through the NVIDIA GPUs from your Proxmox VE 8 server. In our case, the CGroup IDs are 195, 237, and 226.

$ ls -lh /dev/nvidia*

$ ls -lh /dev/dri
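The numbers can also be collected with a short script instead of being read from the “ls” output. This is only a sketch: it assumes GNU “stat” (which Proxmox VE ships as part of coreutils), and “device_majors” is a hypothetical helper name.

```shell
# Hypothetical helper: print the unique major numbers (in decimal) of the
# character devices passed as arguments. Anything that is not a character
# device, including an unmatched glob pattern, is skipped.
device_majors() {
  for dev in "$@"; do
    [ -c "$dev" ] || continue
    # GNU stat prints the major number in hex with %t; convert it to decimal.
    printf '%d\n' "0x$(stat -c '%t' "$dev")"
  done | sort -un
}

device_majors /dev/nvidia* /dev/dri/*
```

Each printed number is one value that you need to allow in the LXC container configuration.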

Creating a Proxmox VE 8 LXC Container for NVIDIA GPU Passthrough

We used an Ubuntu 22.04 LTS Proxmox VE 8 LXC container in this article for the demonstration since the NVIDIA CUDA and NVIDIA cuDNN libraries are easy to install on Ubuntu 22.04 LTS from the Ubuntu package repositories and it’s easier to test if the NVIDIA CUDA acceleration is working. If you want, you can use other Linux distributions as well. In that case, the NVIDIA CUDA and NVIDIA cuDNN installation commands will vary. Make sure to follow the NVIDIA CUDA and NVIDIA cuDNN installation instructions for your desired Linux distribution.

If you need any assistance in creating a Proxmox VE 8 LXC container, read this article.

Configuring an LXC Container for NVIDIA GPU Passthrough on Proxmox VE 8

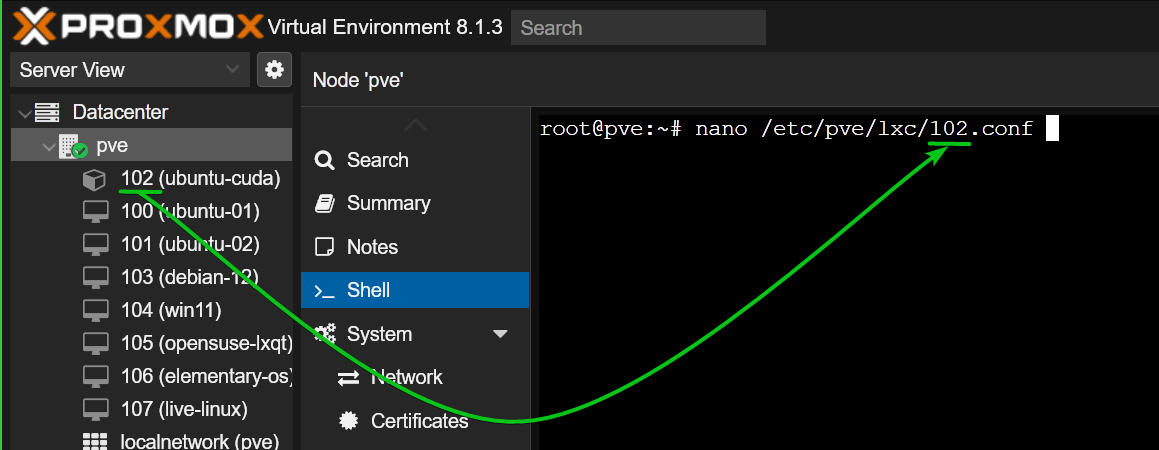

To configure an LXC container (container 102, let’s say) for NVIDIA GPU passthrough, open the LXC container configuration file from the Proxmox VE shell with the nano text editor as follows:

$ nano /etc/pve/lxc/102.conf

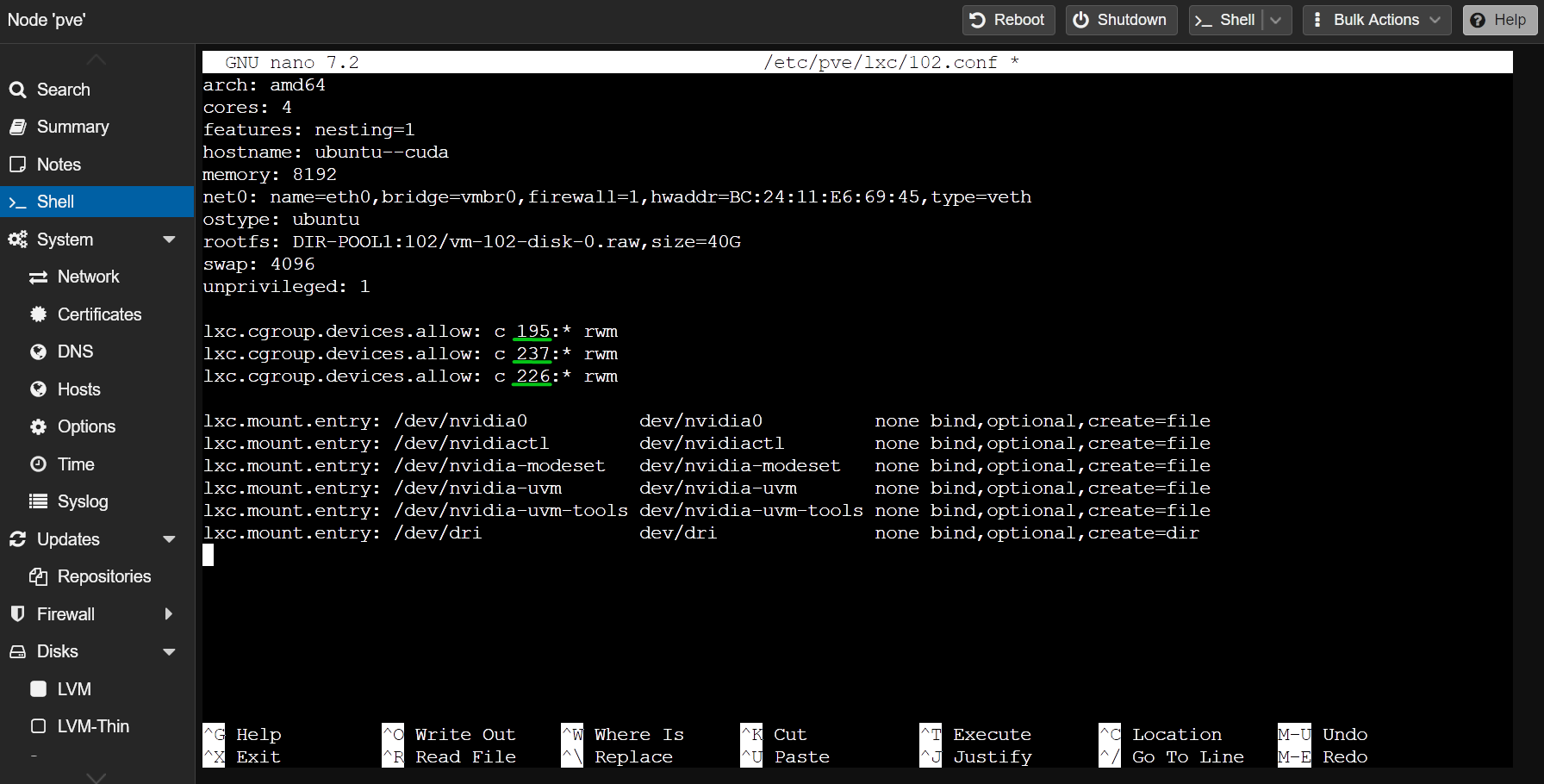

Type in the following lines at the end of the LXC container configuration file. Proxmox VE 8 uses cgroup v2, so the device rules use the “lxc.cgroup2.devices.allow” key:

lxc.cgroup2.devices.allow: c 195:* rwm

lxc.cgroup2.devices.allow: c 237:* rwm

lxc.cgroup2.devices.allow: c 226:* rwm

lxc.mount.entry: /dev/nvidia0 dev/nvidia0 none bind,optional,create=file

lxc.mount.entry: /dev/nvidiactl dev/nvidiactl none bind,optional,create=file

lxc.mount.entry: /dev/nvidia-modeset dev/nvidia-modeset none bind,optional,create=file

lxc.mount.entry: /dev/nvidia-uvm dev/nvidia-uvm none bind,optional,create=file

lxc.mount.entry: /dev/nvidia-uvm-tools dev/nvidia-uvm-tools none bind,optional,create=file

lxc.mount.entry: /dev/dri dev/dri none bind,optional,create=dir

Make sure to replace the CGroup IDs in the “lxc.cgroup2.devices.allow” lines with the ones that you noted on your Proxmox VE 8 server. Once you’re done, press <Ctrl> + X followed by “Y” and <Enter> to save the LXC container configuration file.
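If you configure several containers, typing these lines by hand gets tedious. The following sketch prints them for any set of CGroup IDs. It assumes the cgroup v2 key “lxc.cgroup2.devices.allow” and the device file names listed above; “gpu_lxc_lines” is a hypothetical helper name, not a Proxmox VE command.

```shell
# Hypothetical helper: print the device-allow and bind-mount lines for an
# LXC container configuration. Arguments: the CGroup IDs (device major
# numbers) noted on the Proxmox VE host.
gpu_lxc_lines() {
  for major in "$@"; do
    printf 'lxc.cgroup2.devices.allow: c %s:* rwm\n' "$major"
  done
  for dev in nvidia0 nvidiactl nvidia-modeset nvidia-uvm nvidia-uvm-tools; do
    printf 'lxc.mount.entry: /dev/%s dev/%s none bind,optional,create=file\n' "$dev" "$dev"
  done
  printf 'lxc.mount.entry: /dev/dri dev/dri none bind,optional,create=dir\n'
}

# Print the lines for the CGroup IDs used in this article.
gpu_lxc_lines 195 237 226
```

Review the output first. Once it looks right, you can append it to the container configuration file by redirecting it with “>>” to “/etc/pve/lxc/102.conf” while the container is stopped.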

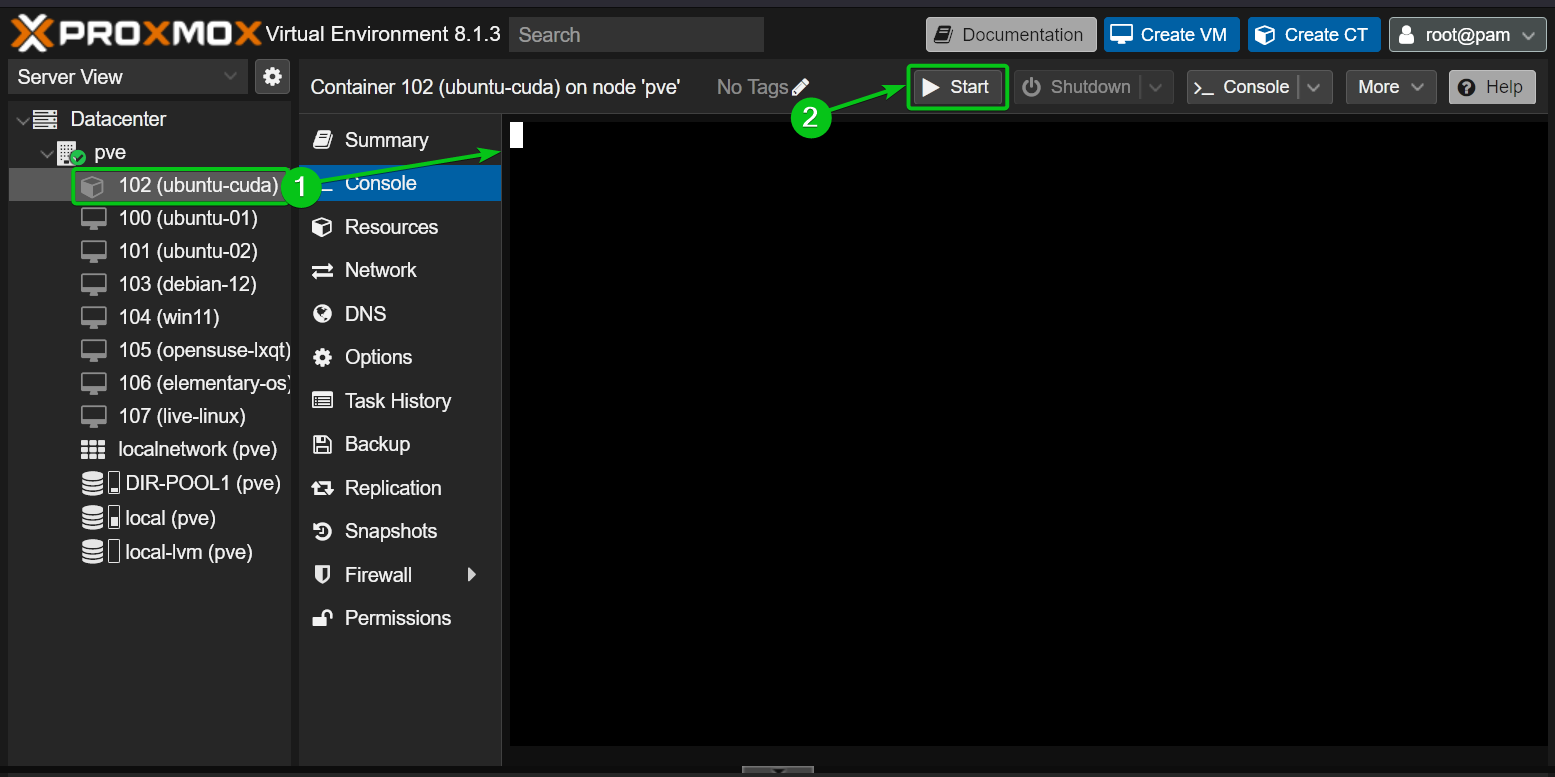

Now, start the LXC container from the Proxmox VE 8 dashboard.

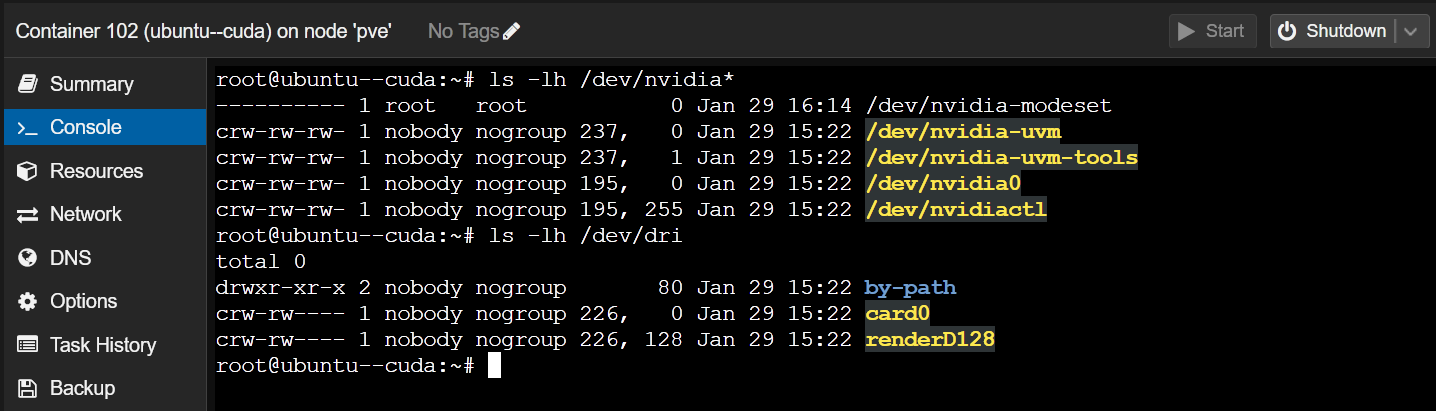

If the NVIDIA GPU passthrough is successful, the LXC container should start without any error and you should see the NVIDIA device files in the “/dev” directory of the container.

$ ls -lh /dev/nvidia*

$ ls -lh /dev/dri
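The same check can be scripted inside the container. The sketch below assumes the device file names used in this article; “missing_gpu_nodes” is a hypothetical helper name. It only tests that each entry exists, not that the container is allowed to open it.

```shell
# Hypothetical helper: print every expected NVIDIA device entry that is
# absent from a /dev-like directory (defaults to /dev).
missing_gpu_nodes() {
  devdir="${1:-/dev}"
  for node in nvidia0 nvidiactl nvidia-modeset nvidia-uvm nvidia-uvm-tools dri; do
    [ -e "$devdir/$node" ] || echo "$node"
  done
}

# No output means that every expected entry is present.
missing_gpu_nodes /dev
```

If an entry is listed, compare the matching “lxc.mount.entry” line in the container configuration file with the device files on the Proxmox VE 8 server.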

Installing the NVIDIA GPU Drivers on the Proxmox VE 8 LXC Container

NOTE: We are using an Ubuntu 22.04 LTS LXC container on our Proxmox VE 8 server for demonstration. If you’re using another Linux distribution on the LXC container, your commands will vary slightly from ours. So, make sure to adjust the commands depending on the Linux distribution you’re using on the container.

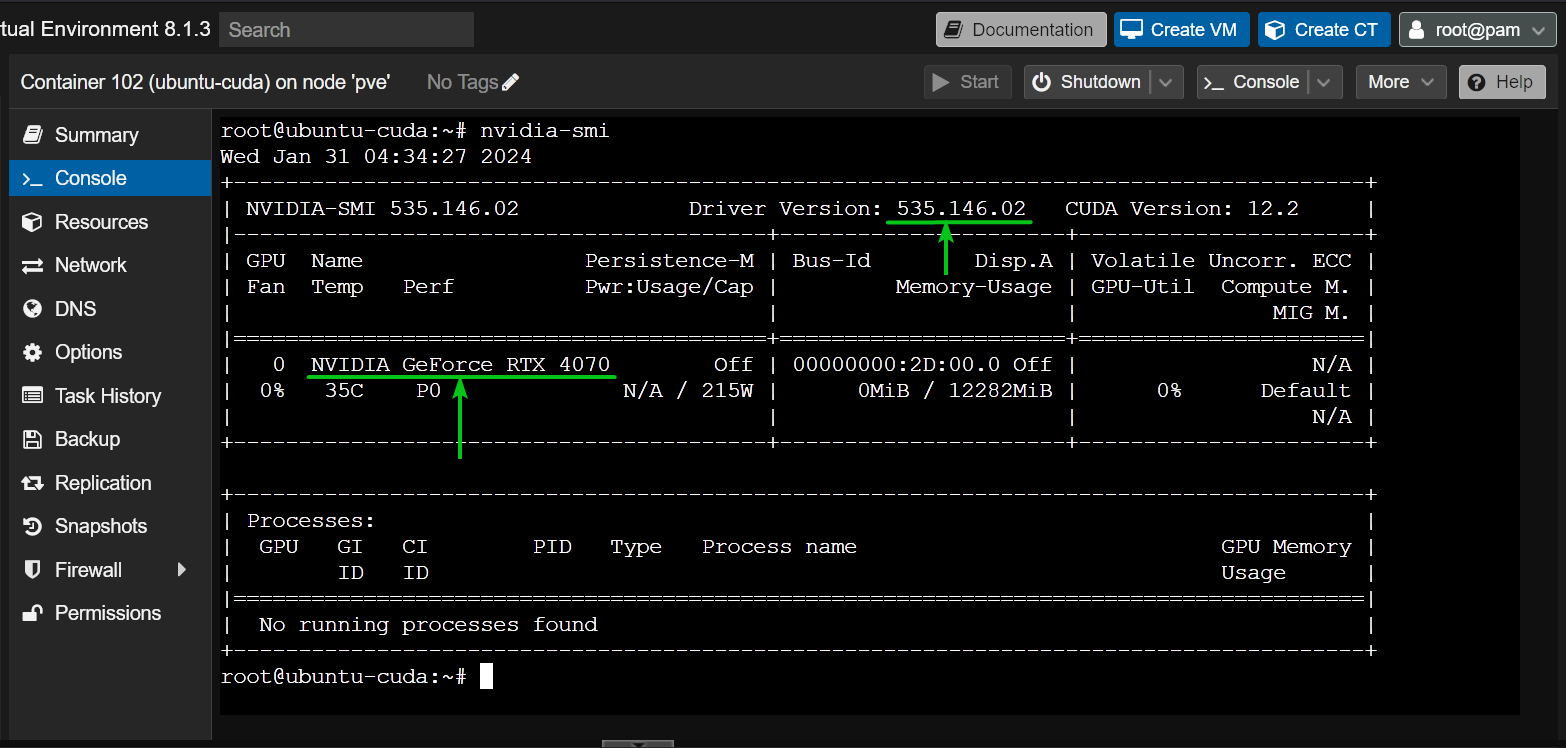

You can find the NVIDIA GPU drivers version that you installed on your Proxmox VE 8 server with the “nvidia-smi” command. As you can see, we have the NVIDIA GPU drivers version 535.146.02 installed on our Proxmox VE 8 server. So, we must install the NVIDIA GPU drivers version 535.146.02 on our LXC container as well.

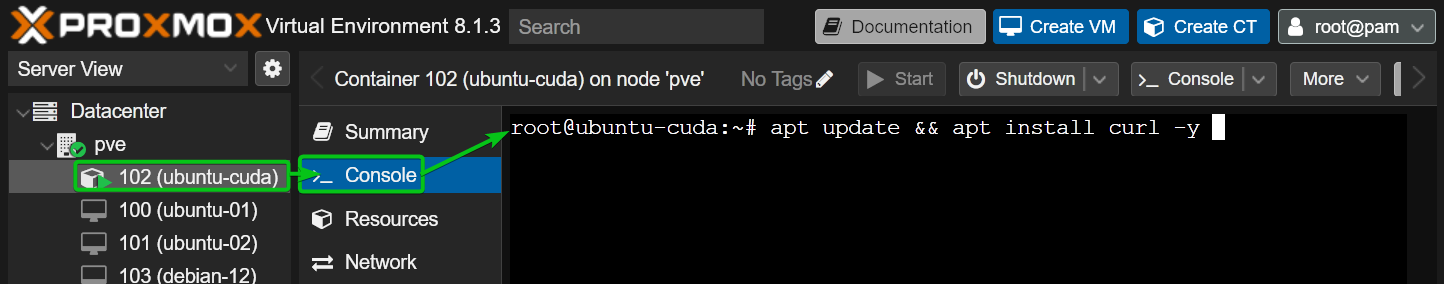

First, install curl on the LXC container as follows:

$ apt update && apt install curl -y

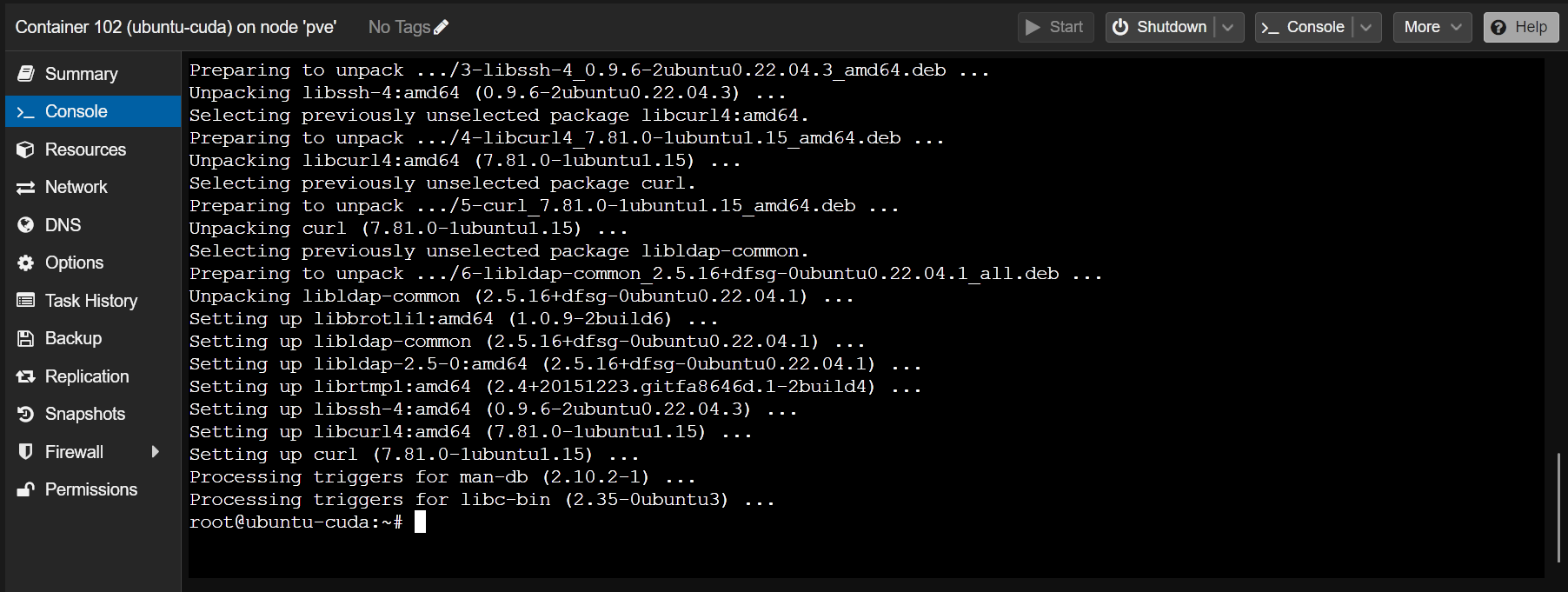

CURL should be installed on the LXC container.

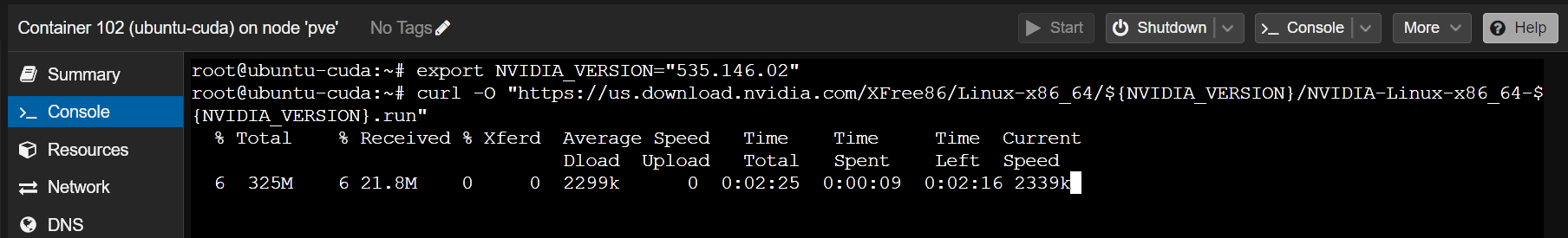

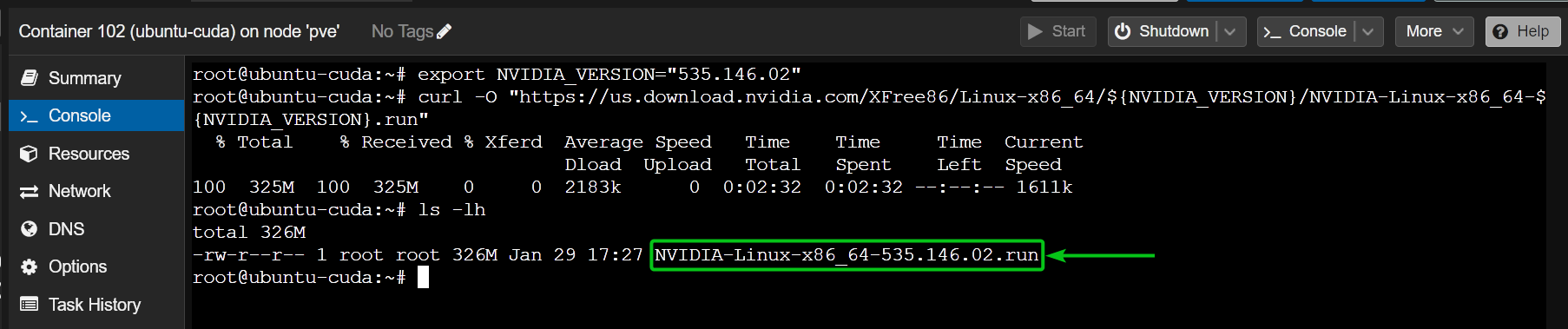

To install the NVIDIA GPU drivers version 535.146.02 (let’s say), export the NVIDIA_VERSION environment variable and run the curl command (on the container) to download the required version of the NVIDIA GPU drivers installer file.

$ export NVIDIA_VERSION="535.146.02"

$ curl -O "https://us.download.nvidia.com/XFree86/Linux-x86_64/${NVIDIA_VERSION}/NVIDIA-Linux-x86_64-${NVIDIA_VERSION}.run"
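To avoid copying the version number by hand, you can derive the download URL from the “nvidia-smi” output on the Proxmox VE 8 server. This is a sketch only: it assumes that the “nvidia-smi” banner contains the text “Driver Version: <number>”, and the helper names “driver_version” and “installer_url” are hypothetical.

```shell
# Hypothetical helper: read nvidia-smi output on stdin and print the
# driver version found in its banner line.
driver_version() {
  sed -n 's/.*Driver Version: *\([0-9][0-9.]*\).*/\1/p' | head -n 1
}

# Hypothetical helper: build the installer URL for a given driver version.
installer_url() {
  printf 'https://us.download.nvidia.com/XFree86/Linux-x86_64/%s/NVIDIA-Linux-x86_64-%s.run\n' "$1" "$1"
}

# Run this part on the Proxmox VE host, where nvidia-smi is already installed.
if command -v nvidia-smi >/dev/null 2>&1; then
  installer_url "$(nvidia-smi | driver_version)"
fi
```

The printed URL can then be passed to curl on the container, which guarantees that the container gets the same driver version as the host.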

The correct version of the NVIDIA GPU drivers installer file should be downloaded on the LXC container as you can see in the following screenshot:

Now, add the executable permission to the NVIDIA GPU drivers installer file on the container as follows:

$ chmod +x NVIDIA-Linux-x86_64-${NVIDIA_VERSION}.run

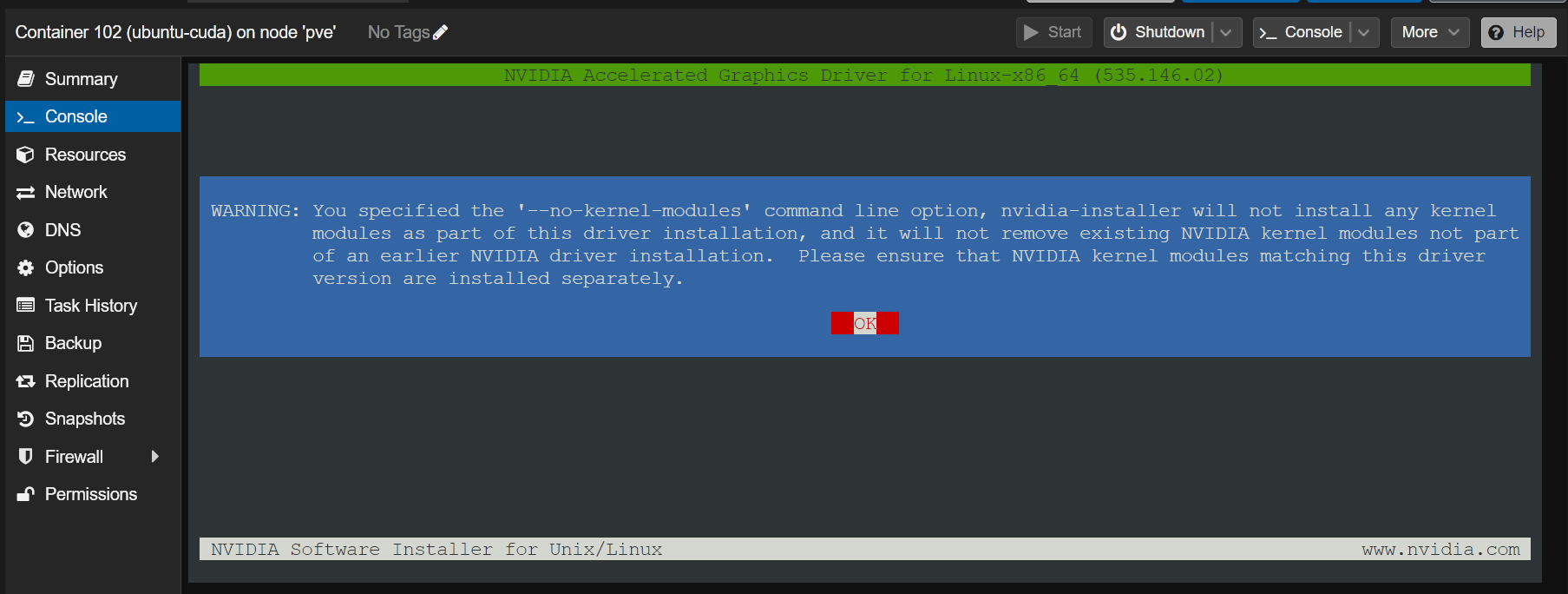

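The LXC container shares the kernel of the Proxmox VE 8 server, so the NVIDIA kernel modules that are loaded on the server are used and only the user-space parts of the drivers are needed in the container. To install the NVIDIA GPU drivers on the container, run the NVIDIA GPU drivers installer file with the “--no-kernel-module” option as follows:

$ ./NVIDIA-Linux-x86_64-${NVIDIA_VERSION}.run --no-kernel-module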

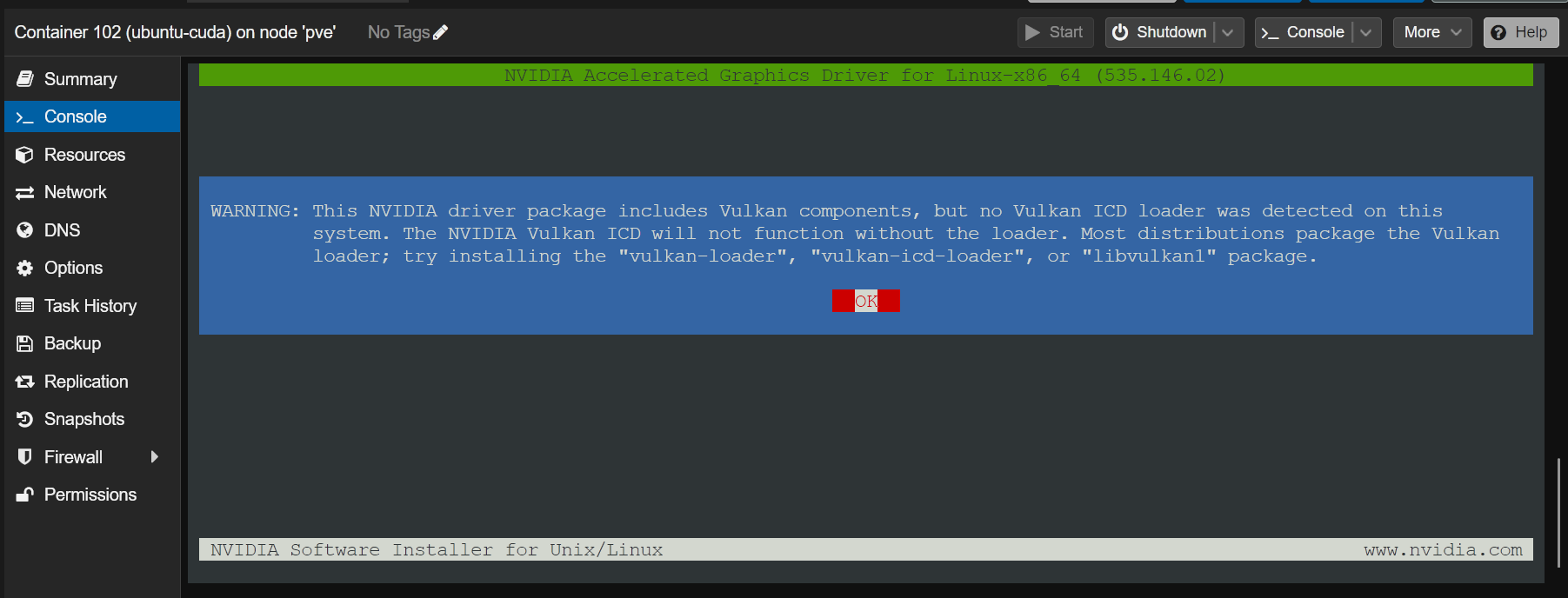

Once you see this option, select “OK” and press <Enter>.

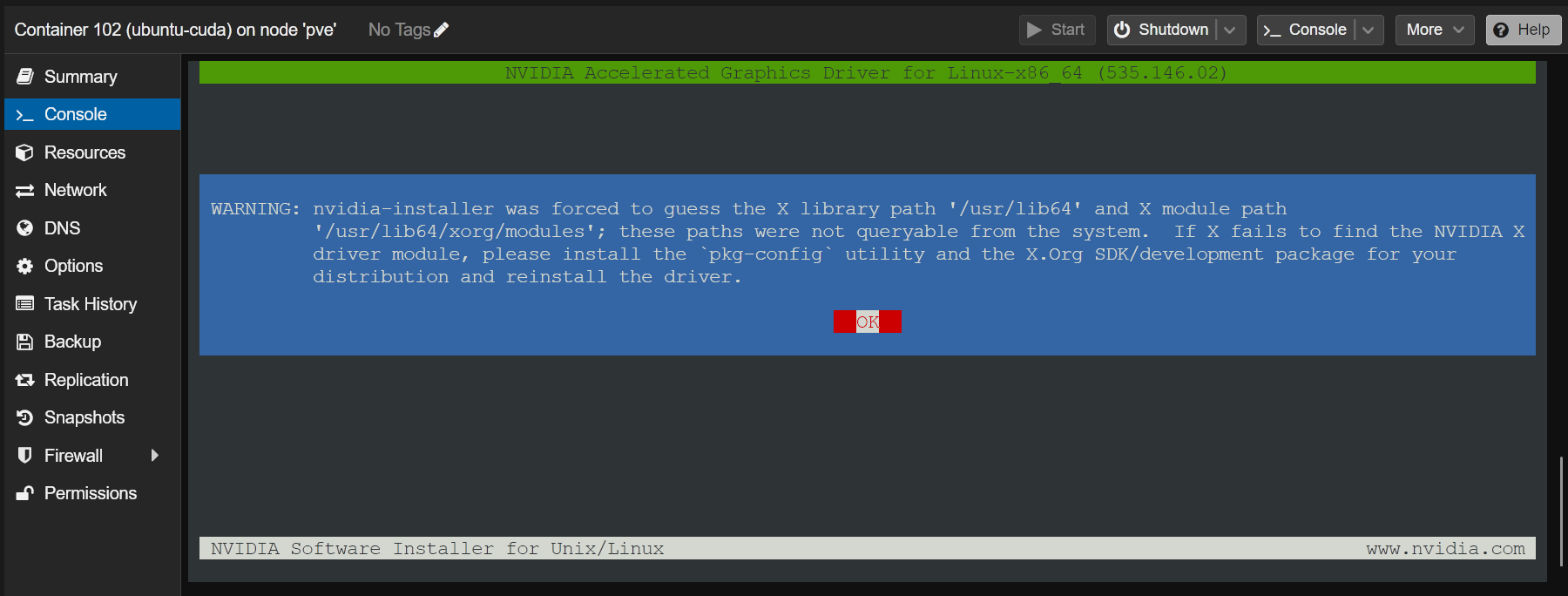

Select “OK” and press <Enter>.

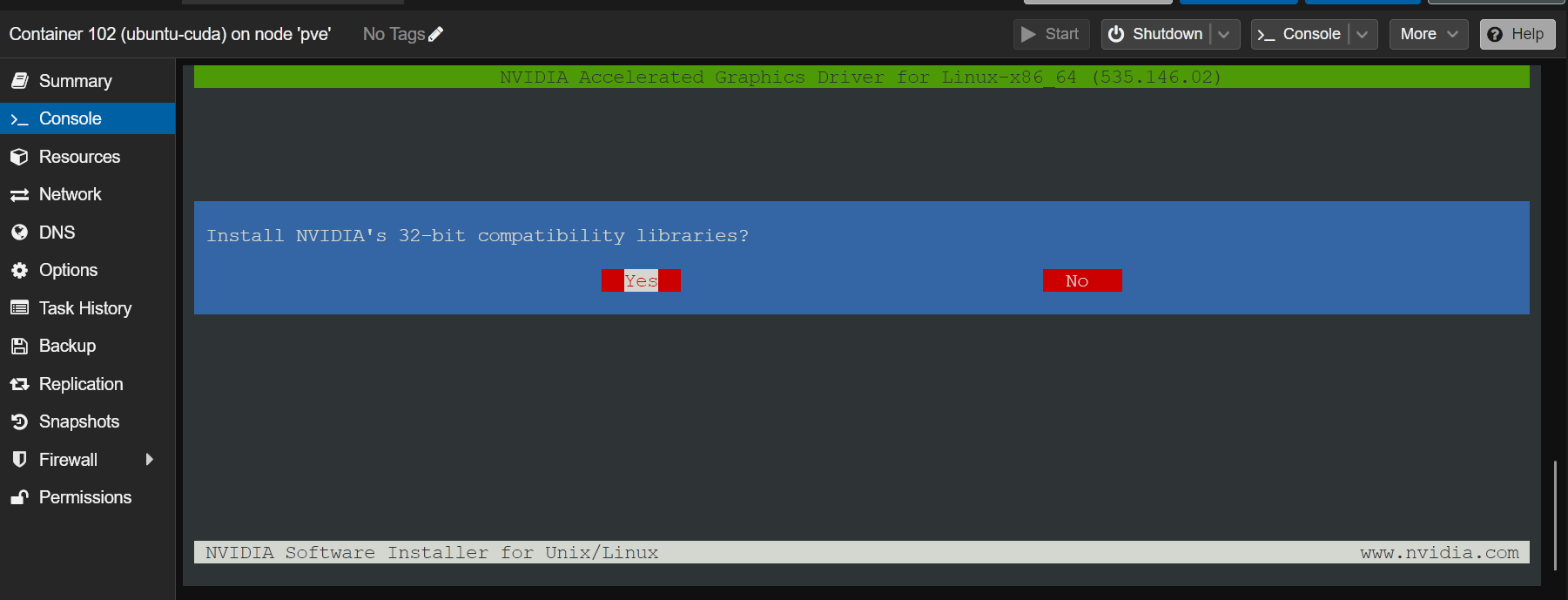

Select “Yes” and press <Enter>.

Select “OK” and press <Enter>.

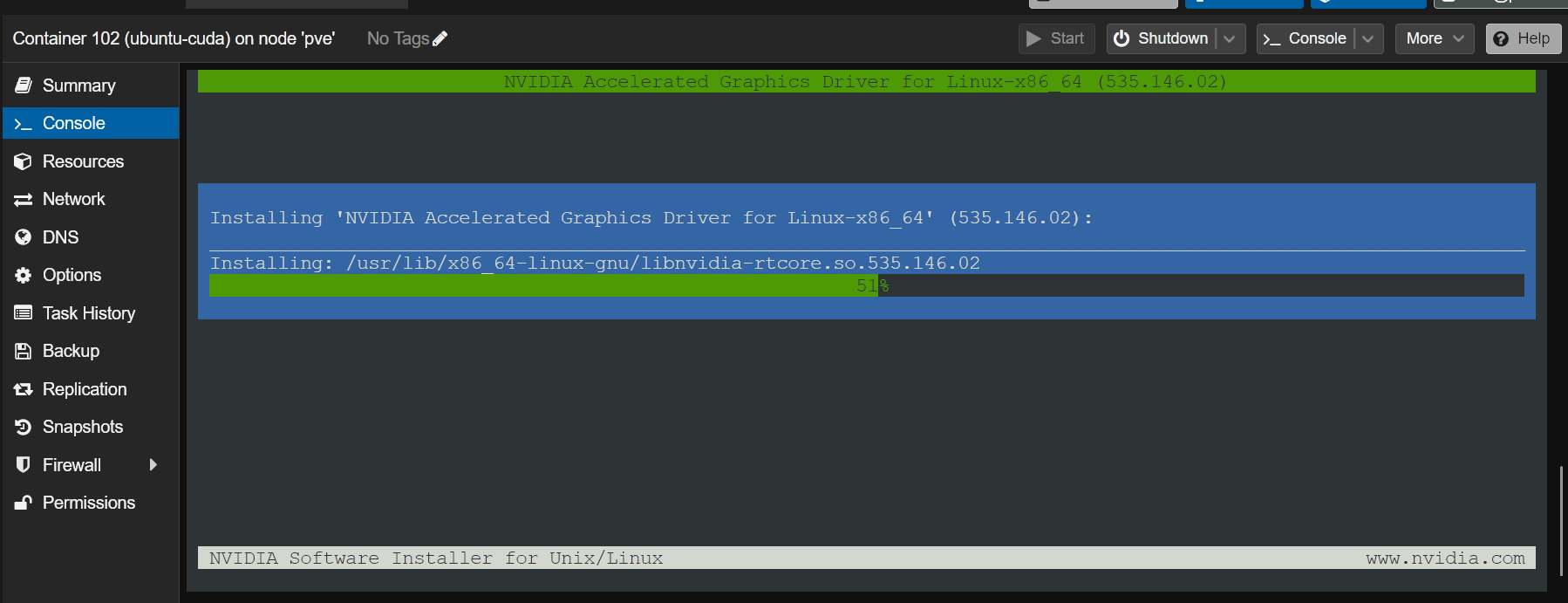

The NVIDIA GPU drivers are being installed on the LXC container. It takes a few seconds to complete.

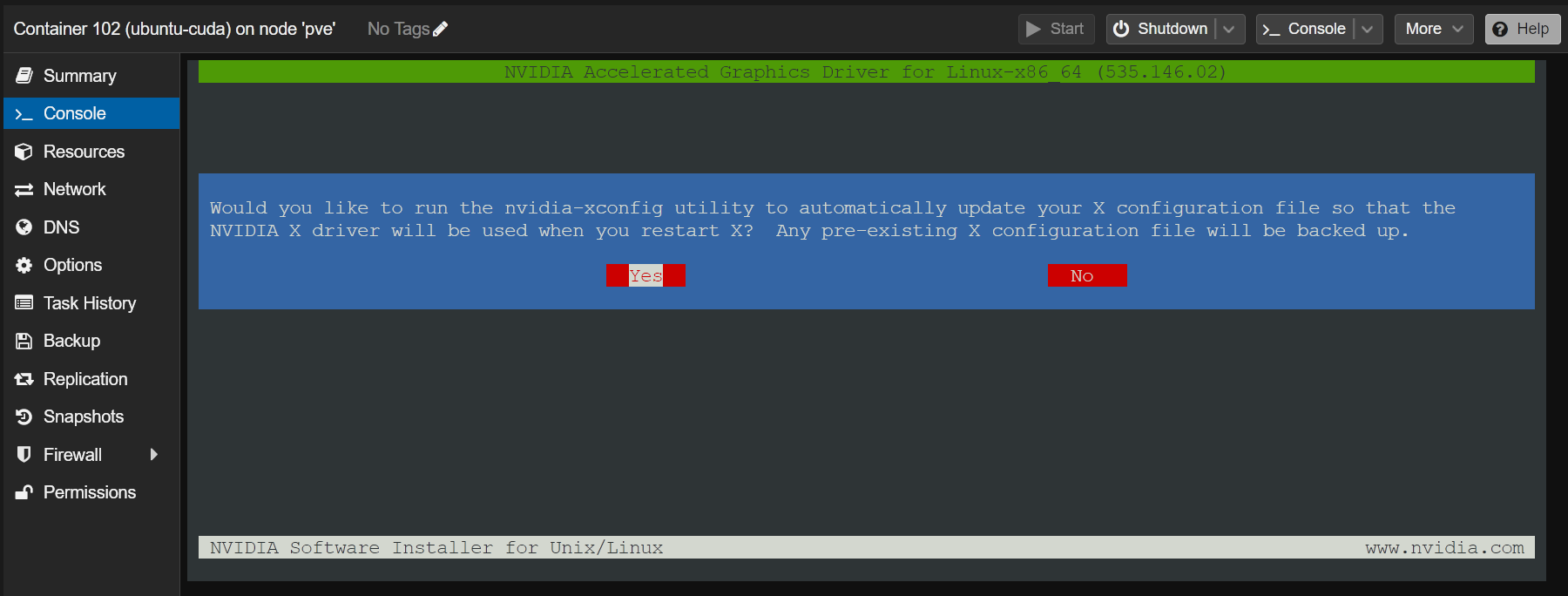

Once you see this prompt, select “Yes” and press <Enter>.

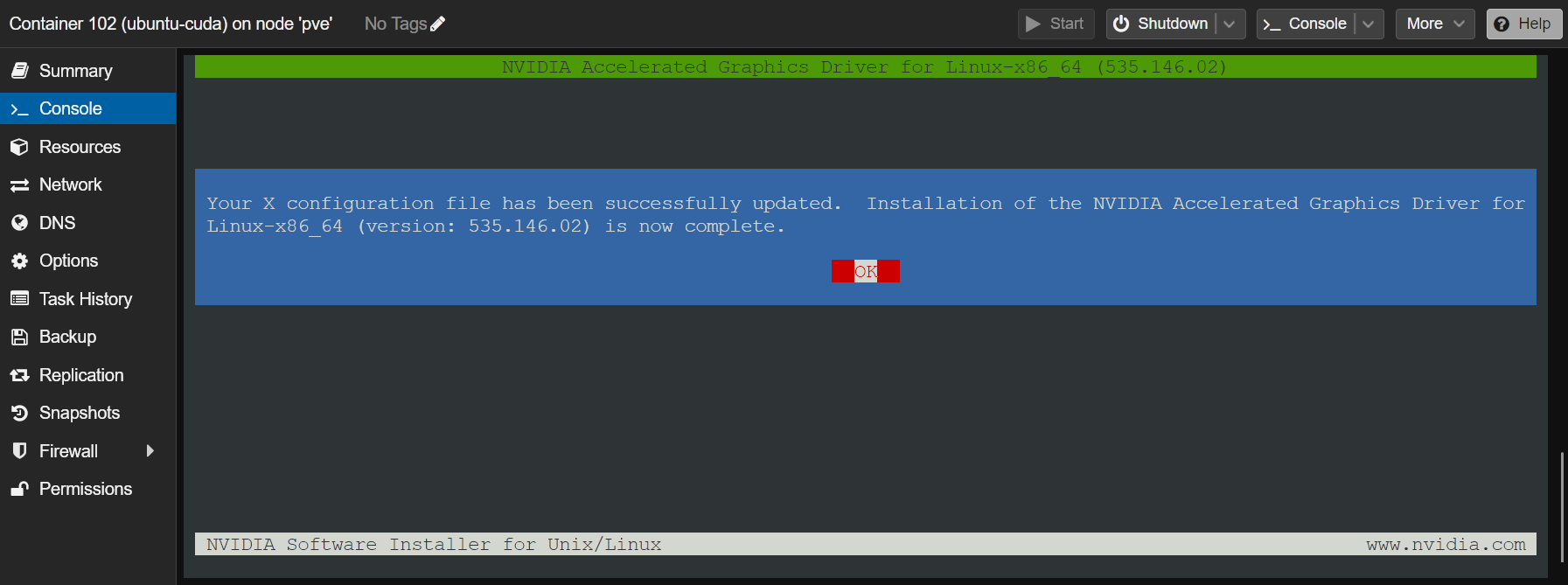

Select “OK” and press <Enter>. The NVIDIA GPU drivers should be installed on the LXC container.

To confirm whether the NVIDIA GPU drivers are installed and working, run the “nvidia-smi” command on the LXC container. In our case, the NVIDIA GPU driver version 535.146.02 (the same version as installed on the Proxmox VE 8 server) is installed on the LXC container and it detected our NVIDIA RTX 4070 GPU correctly.

$ nvidia-smi

Installing NVIDIA CUDA and cuDNN on the Proxmox VE 8 LXC Container

NOTE: We are using an Ubuntu 22.04 LTS LXC container on our Proxmox VE 8 server for demonstration. If you’re using another Linux distribution on the LXC container, your commands will vary slightly from ours. So, make sure to adjust the commands depending on the Linux distribution you’re using on the container.

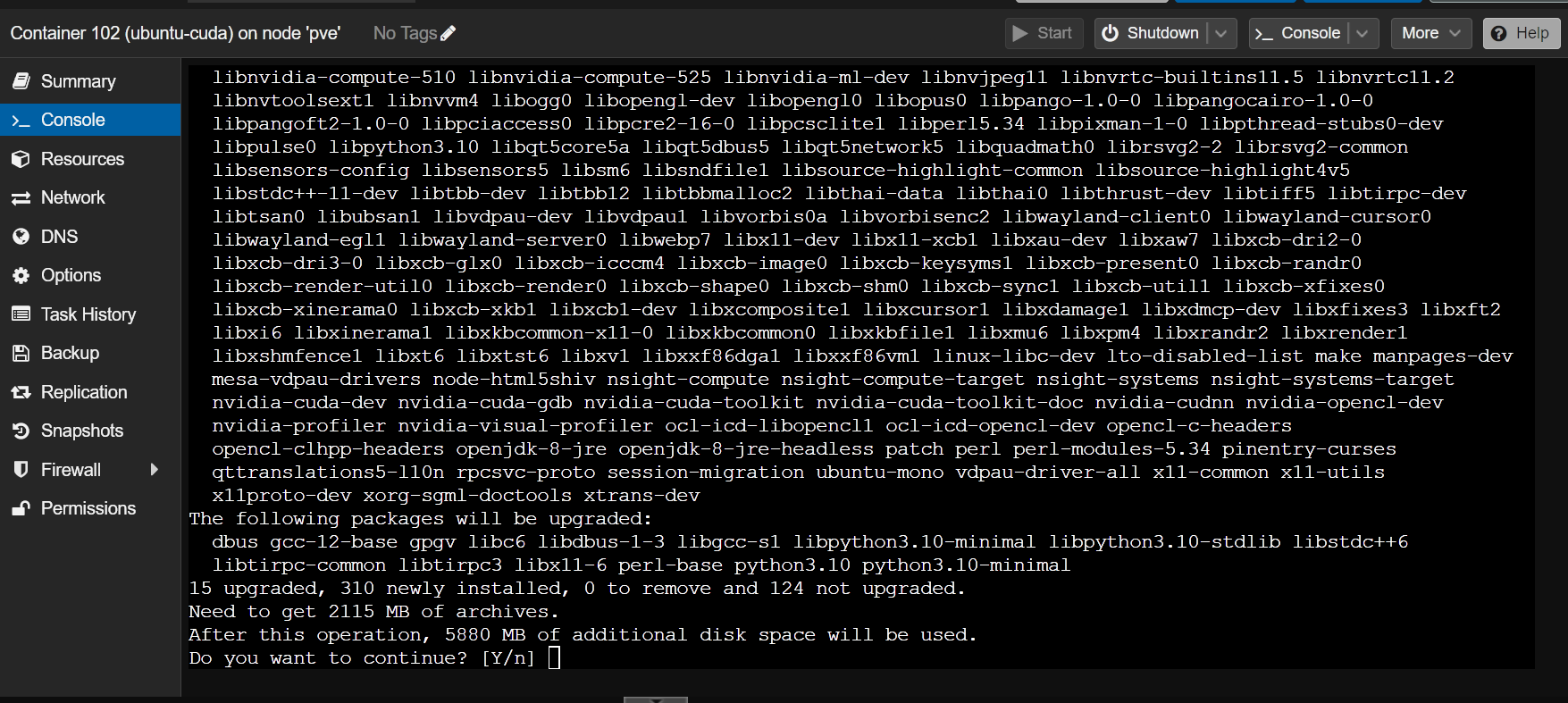

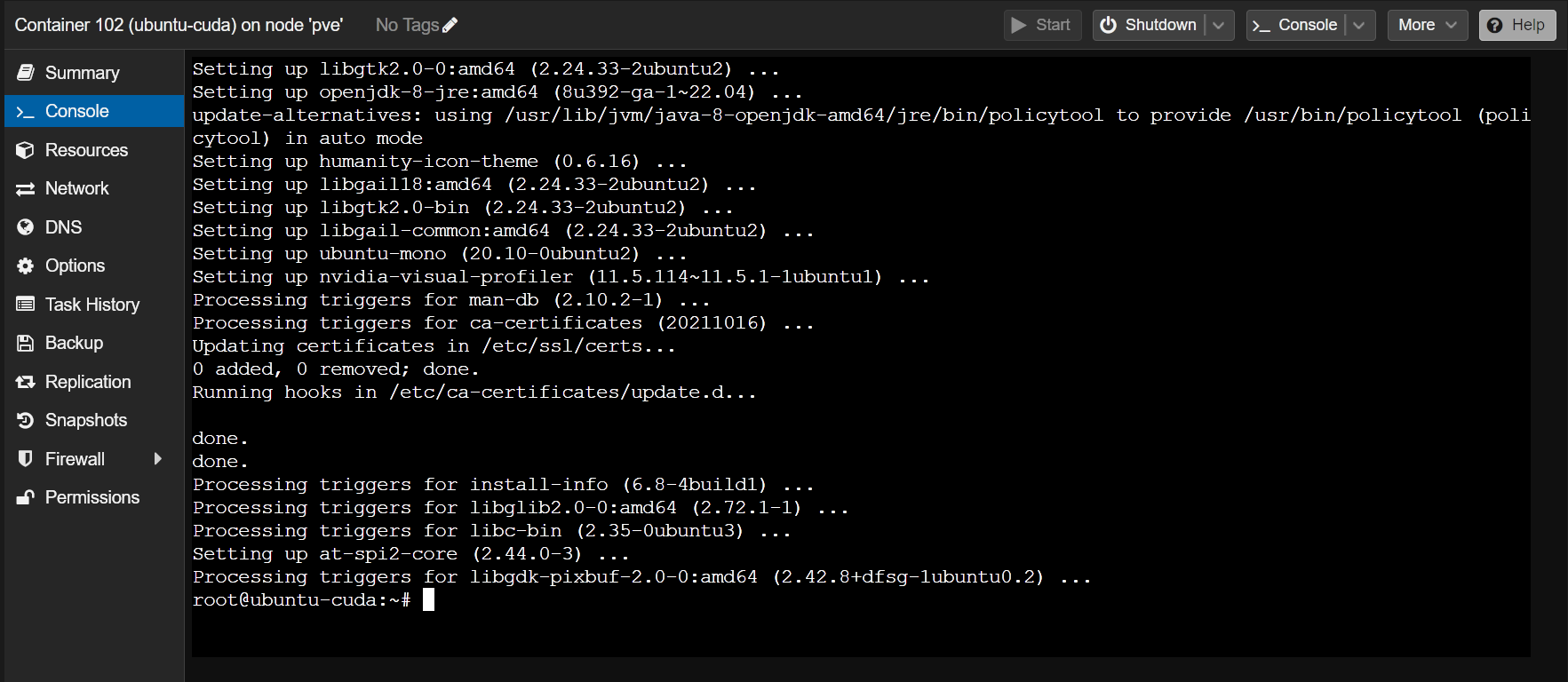

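To install NVIDIA CUDA and cuDNN from the Ubuntu package repositories on the Ubuntu 22.04 LTS Proxmox VE 8 container, run the following command on the container:

$ apt install build-essential nvidia-cuda-toolkit nvidia-cudnn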

To confirm the installation, press “Y” and then press <Enter>.

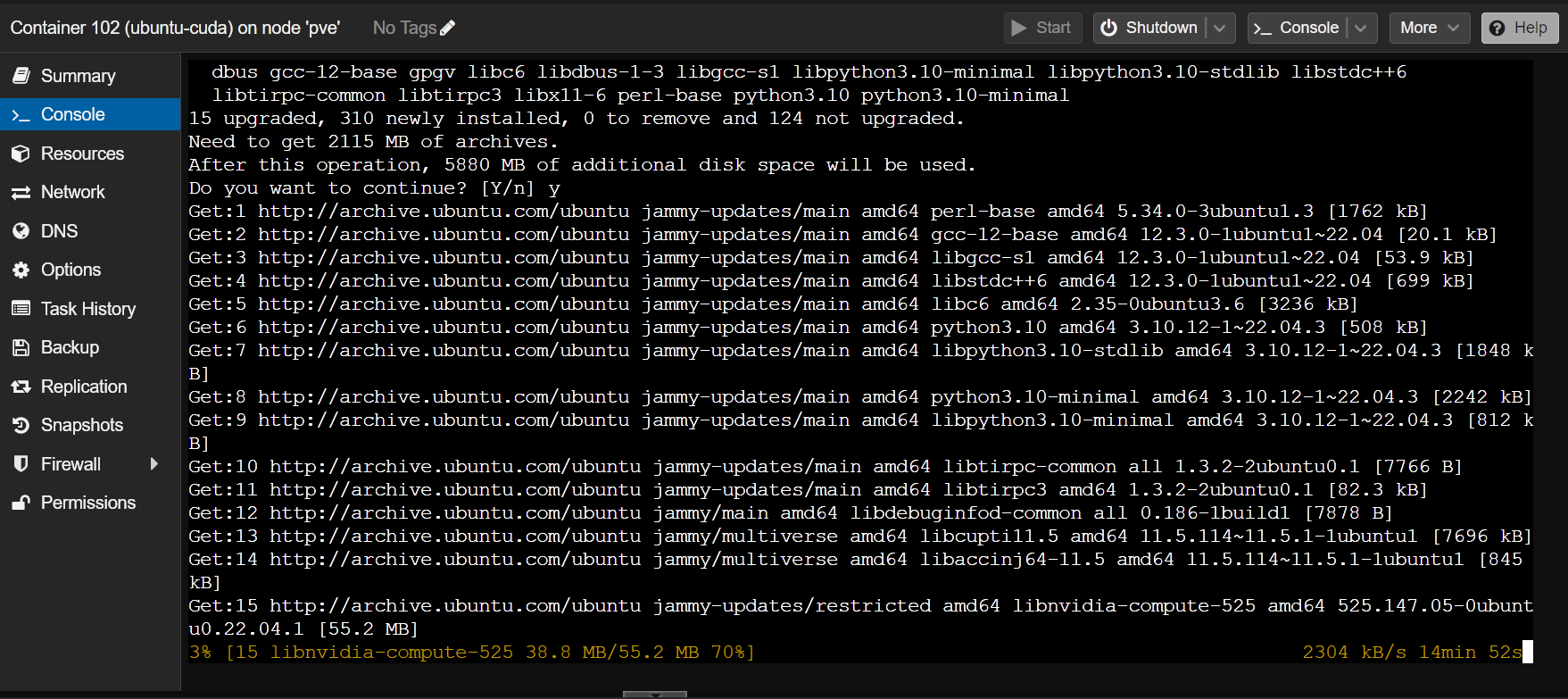

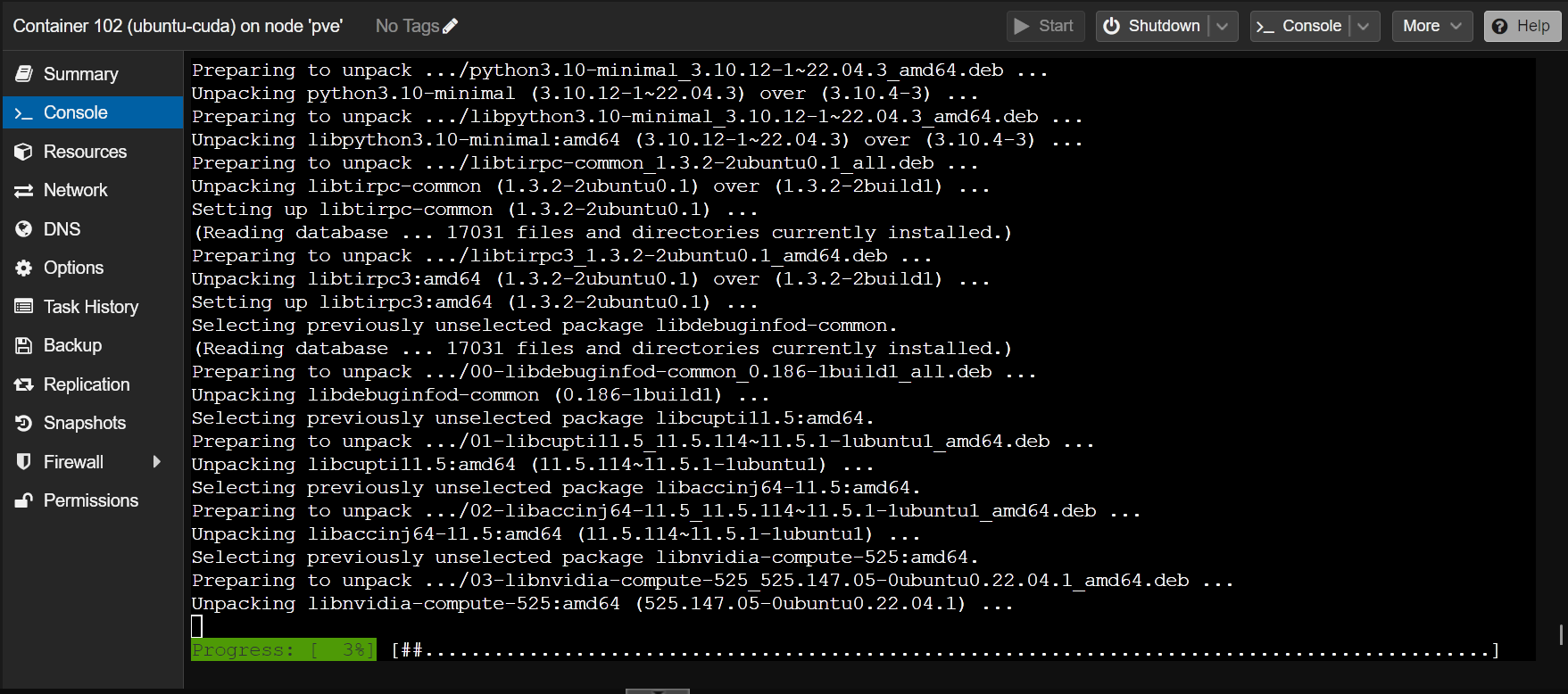

The required packages are being downloaded and installed. It takes a while to complete.

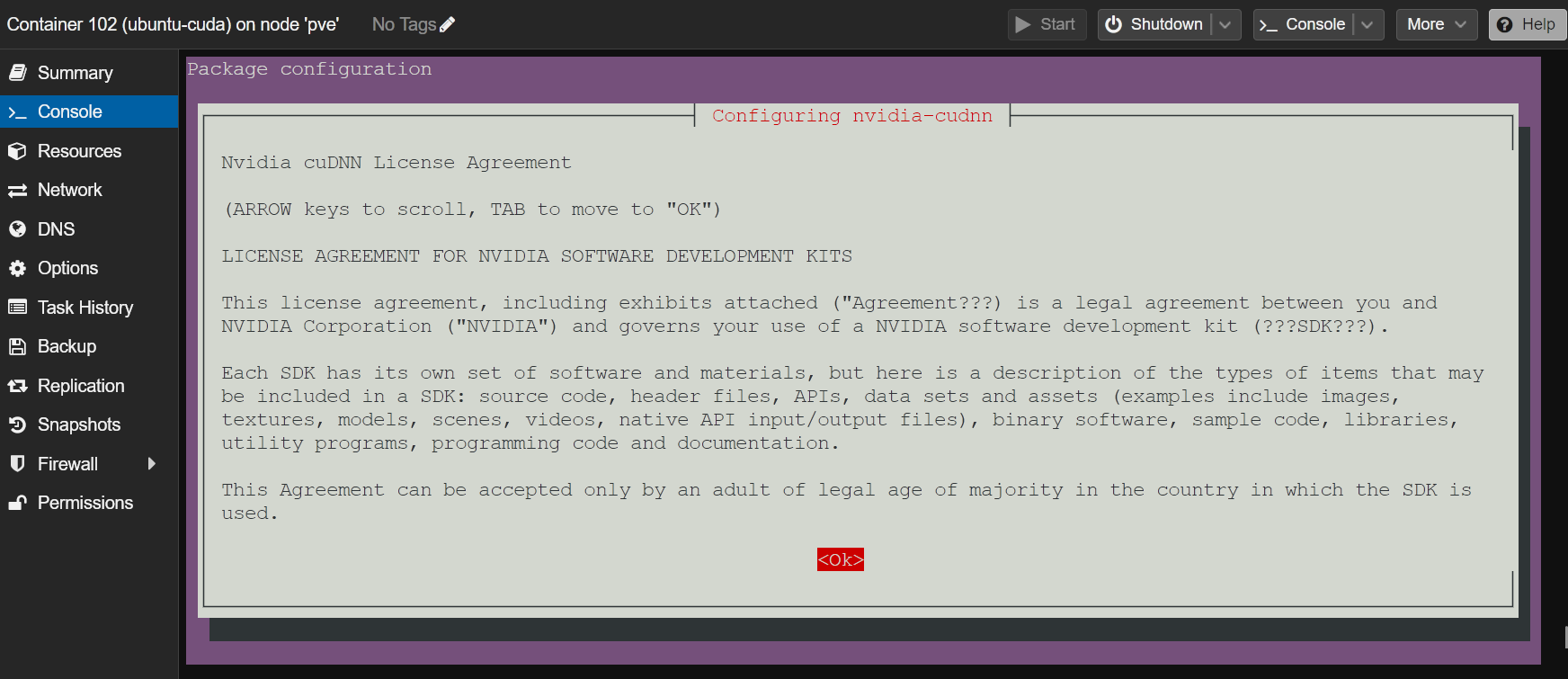

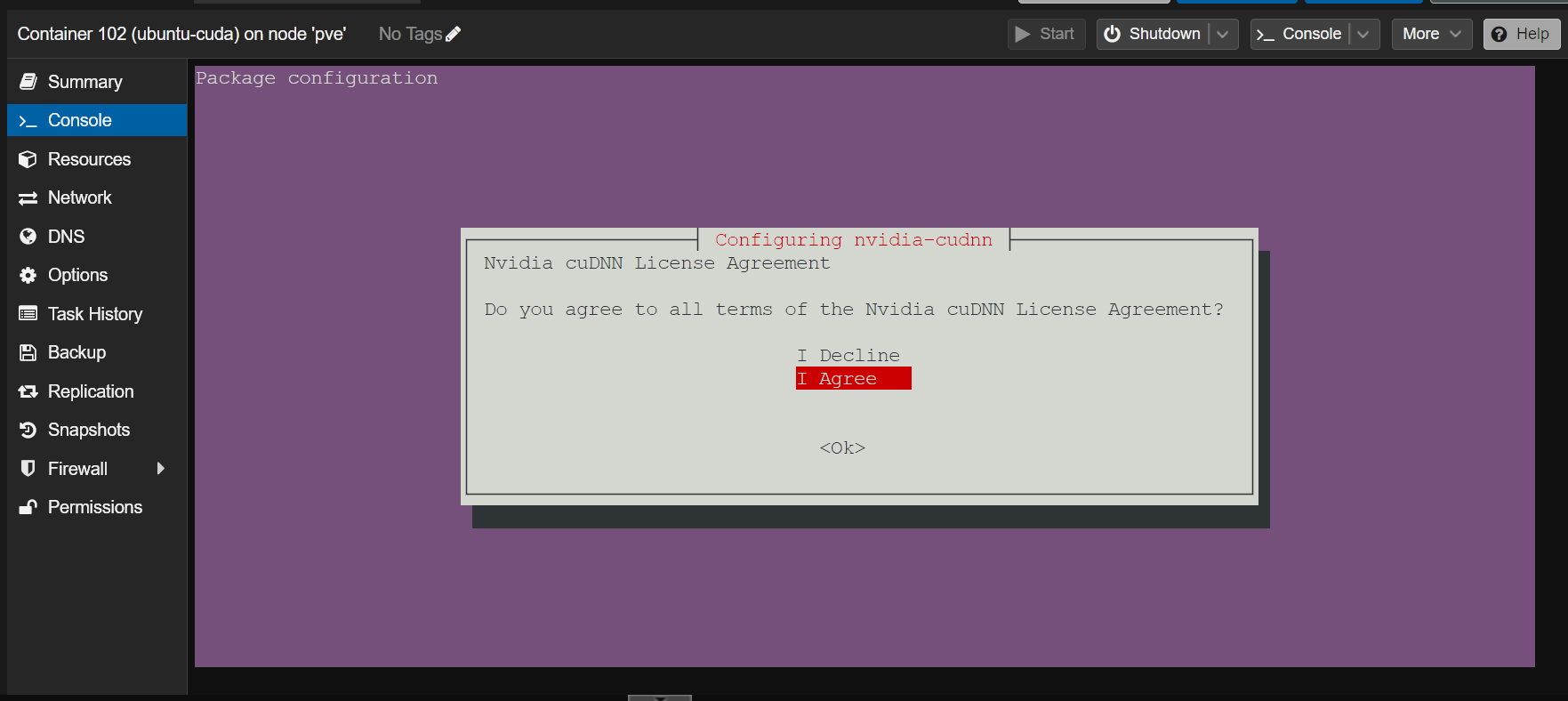

Once you see this window, select “OK” and press <Enter>.

Select “I Agree” and press <Enter>.

The installation should continue.

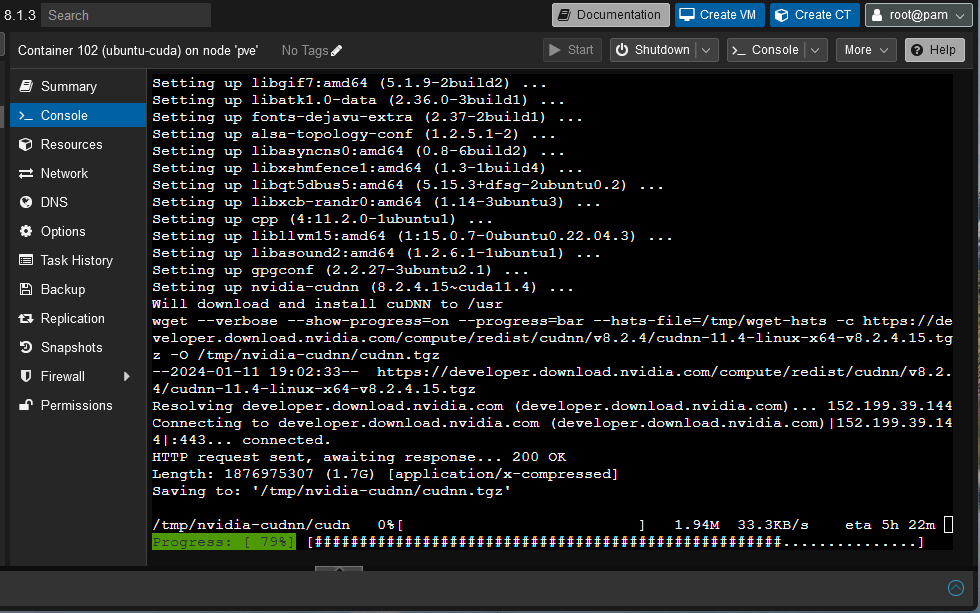

The installer is downloading the NVIDIA cuDNN library archive from NVIDIA. It’s a big file, so it takes a long time to complete.

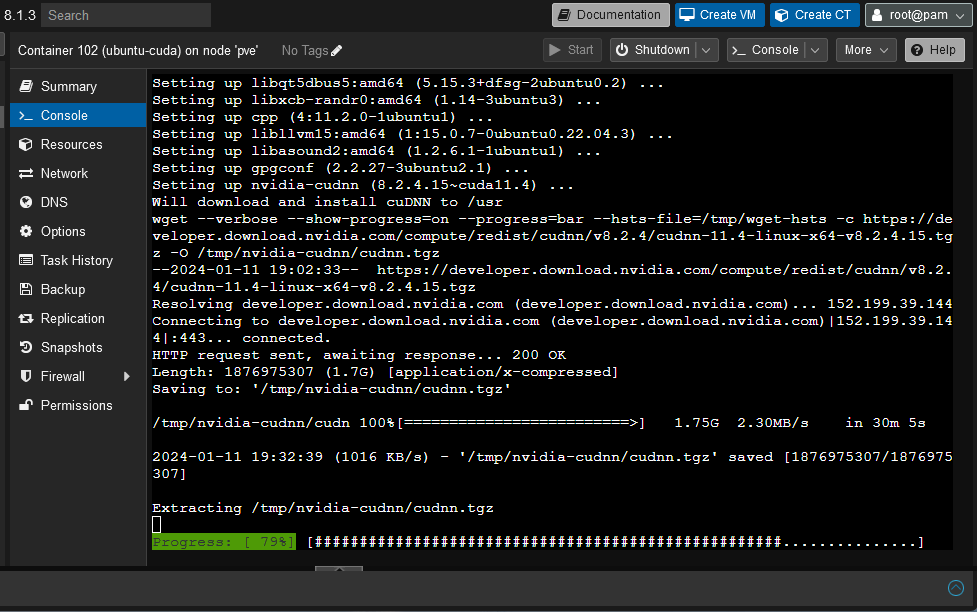

Once the NVIDIA cuDNN library archive is downloaded, the installation should continue as usual.

At this point, NVIDIA CUDA and cuDNN should be installed on the Ubuntu 22.04 LTS Proxmox VE 8 LXC container.

Checking If the NVIDIA CUDA Acceleration Is Working on the Proxmox VE 8 LXC Container

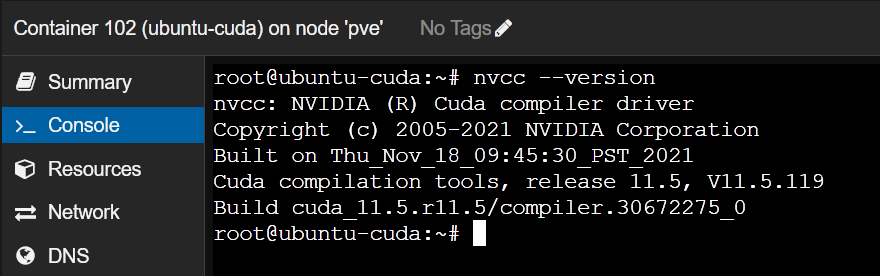

To verify whether NVIDIA CUDA is installed correctly, check if the “nvcc” command is available on the Proxmox VE 8 container as follows:

$ nvcc --version

As you can see, we have NVIDIA CUDA 11.5 installed on our Proxmox VE 8 container.
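If you need the CUDA release number in a script (for example, to pick a matching build of a framework), you can extract it from the “nvcc” output. The sketch below assumes that “nvcc --version” prints a line of the form “release X.Y,”; “cuda_release” is a hypothetical helper name.

```shell
# Hypothetical helper: read "nvcc --version" output on stdin and print
# only the release number (for example, 11.5).
cuda_release() {
  sed -n 's/.*release \([0-9][0-9.]*\),.*/\1/p'
}

if command -v nvcc >/dev/null 2>&1; then
  nvcc --version | cuda_release
fi
```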

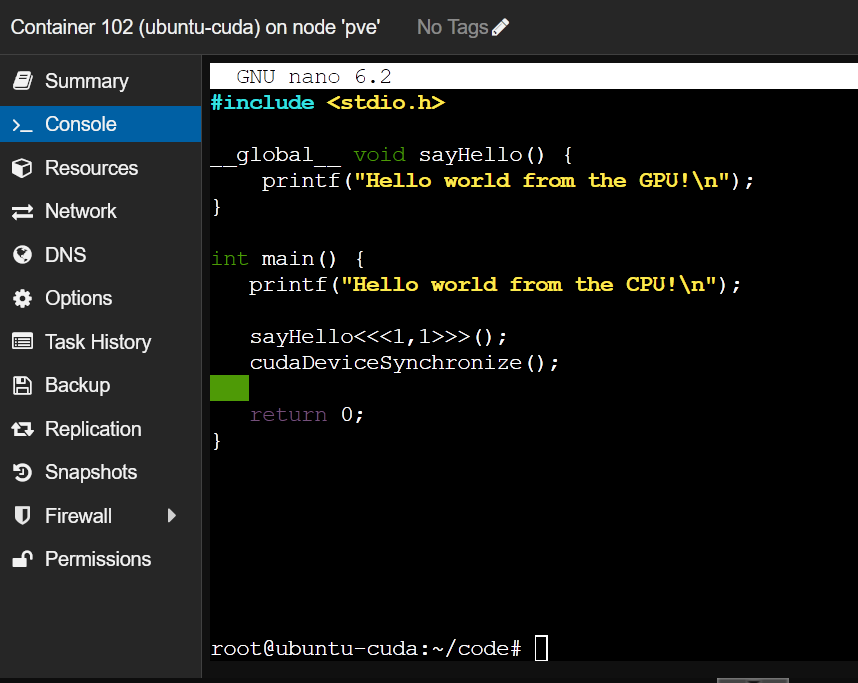

Now, let’s write, compile, and run a simple CUDA C program and see if everything is working as expected.

First, create a “~/code” project directory on the Proxmox VE 8 container to keep the files organized.

$ mkdir ~/code

Navigate to the “~/code” project directory as follows:

$ cd ~/code

Create a new file named “hello.cu” in the “~/code” directory of the Proxmox VE 8 container and open it with the nano text editor:

$ nano hello.cu

Type in the following lines of code in the “hello.cu” file:

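#include <stdio.h>

// Kernel: runs on the GPU.
__global__ void sayHello() {

    printf("Hello world from the GPU!\n");

}

int main() {

    printf("Hello world from the CPU!\n");

    // Launch the kernel with 1 block of 1 thread.
    sayHello<<<1,1>>>();

    // Wait for the GPU to finish so that its output is flushed.
    cudaDeviceSynchronize();

    return 0;

}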

Once you’re done, press <Ctrl> + X followed by “Y” and <Enter> to save the “hello.cu” file.

To compile the “hello.cu” CUDA program on the Proxmox VE 8 container, run the following command:

$ nvcc hello.cu -o hello

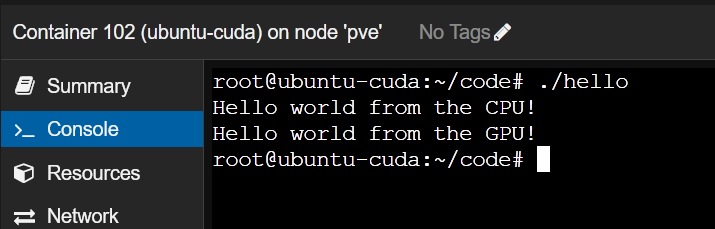

Now, you can run the “hello” CUDA program on the Proxmox VE 8 container as follows:

$ ./hello

If the Proxmox VE 8 container can use the NVIDIA GPU for NVIDIA CUDA acceleration, the program will print two lines as shown in the following screenshot.

If the NVIDIA GPU is not accessible from the Proxmox VE 8 container, the program will print only the first line which is “Hello world from the CPU!”, not the second line.
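The two-line check can also be automated, which is handy if you want to re-test the container after a driver update. This is a minimal sketch that assumes the compiled “hello” program is in the current directory; “gpu_said_hello” is a hypothetical helper name.

```shell
# Hypothetical helper: succeed only if the GPU line appears on stdin.
gpu_said_hello() {
  grep -q 'Hello world from the GPU!'
}

if [ -x ./hello ]; then
  if ./hello | gpu_said_hello; then
    echo "GPU kernel ran"
  else
    echo "GPU kernel did not run" >&2
  fi
fi
```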

Conclusion

In this article, we showed you how to passthrough an NVIDIA GPU from the Proxmox VE 8 host to a Proxmox VE 8 LXC container. We also showed you how to install the same version of the NVIDIA GPU drivers on the Proxmox VE 8 container as the Proxmox VE host. Finally, we showed you how to install NVIDIA CUDA and NVIDIA cuDNN on an Ubuntu 22.04 LTS Proxmox VE 8 container and compile and run a simple NVIDIA CUDA program on the Proxmox VE 8 container.